End-to-End ML Platform on AWS (MLflow + SageMaker)

Production-grade ML platform with experiment tracking, model registry, and SageMaker-based training & deployment, provisioned using AWS CDK Infrastructure as Code.

This work covers the design and implementation of production-grade MLOps systems that span the complete machine learning lifecycle, from data versioning and feature stores to training pipelines, model deployment, and operational monitoring. These systems demonstrate scalable, reproducible AI infrastructure across multiple cloud platforms.

Three-pillar architecture covering the complete ML lifecycle: Training Pipelines, Deployment & Operations, and Data & Feature Systems.

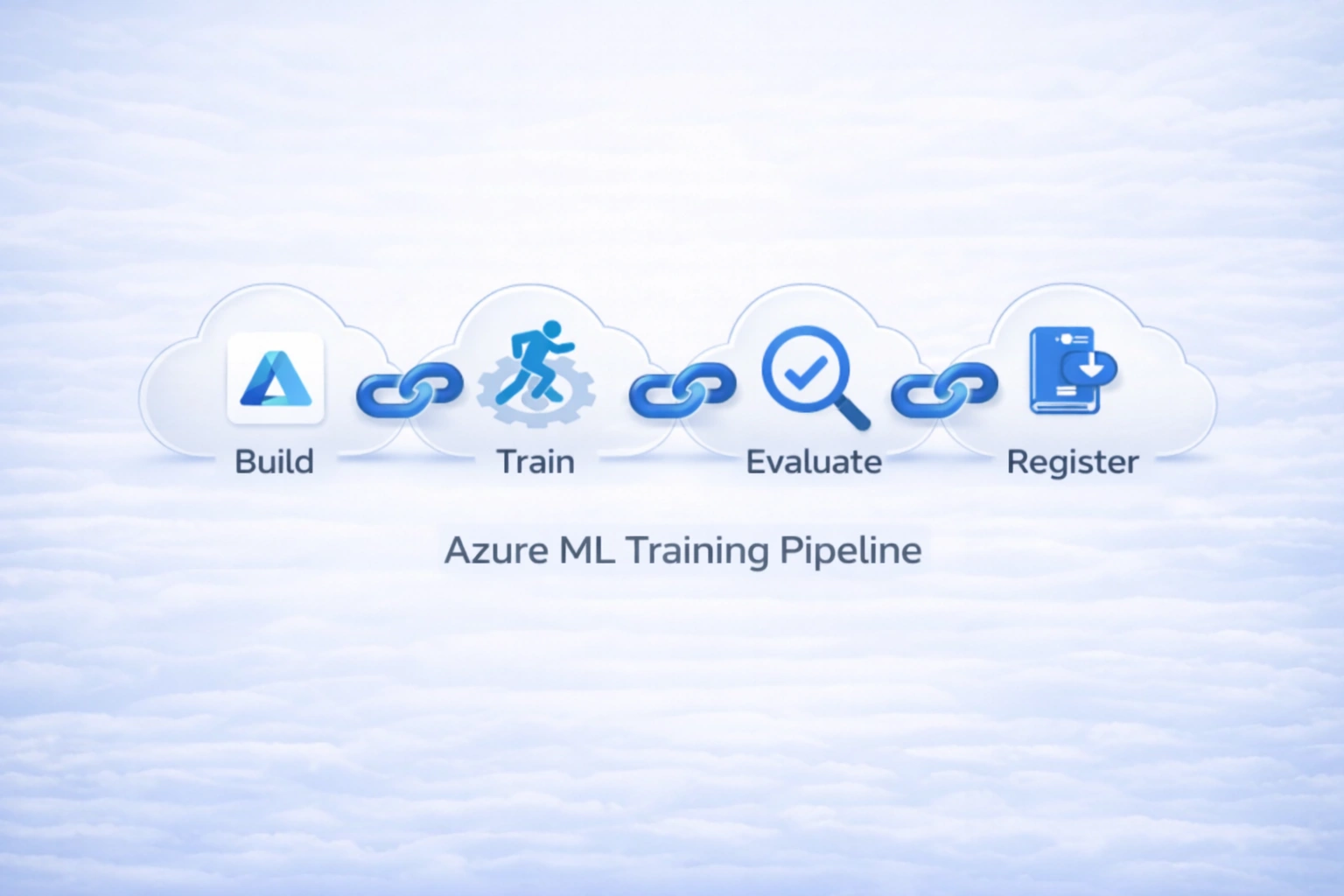

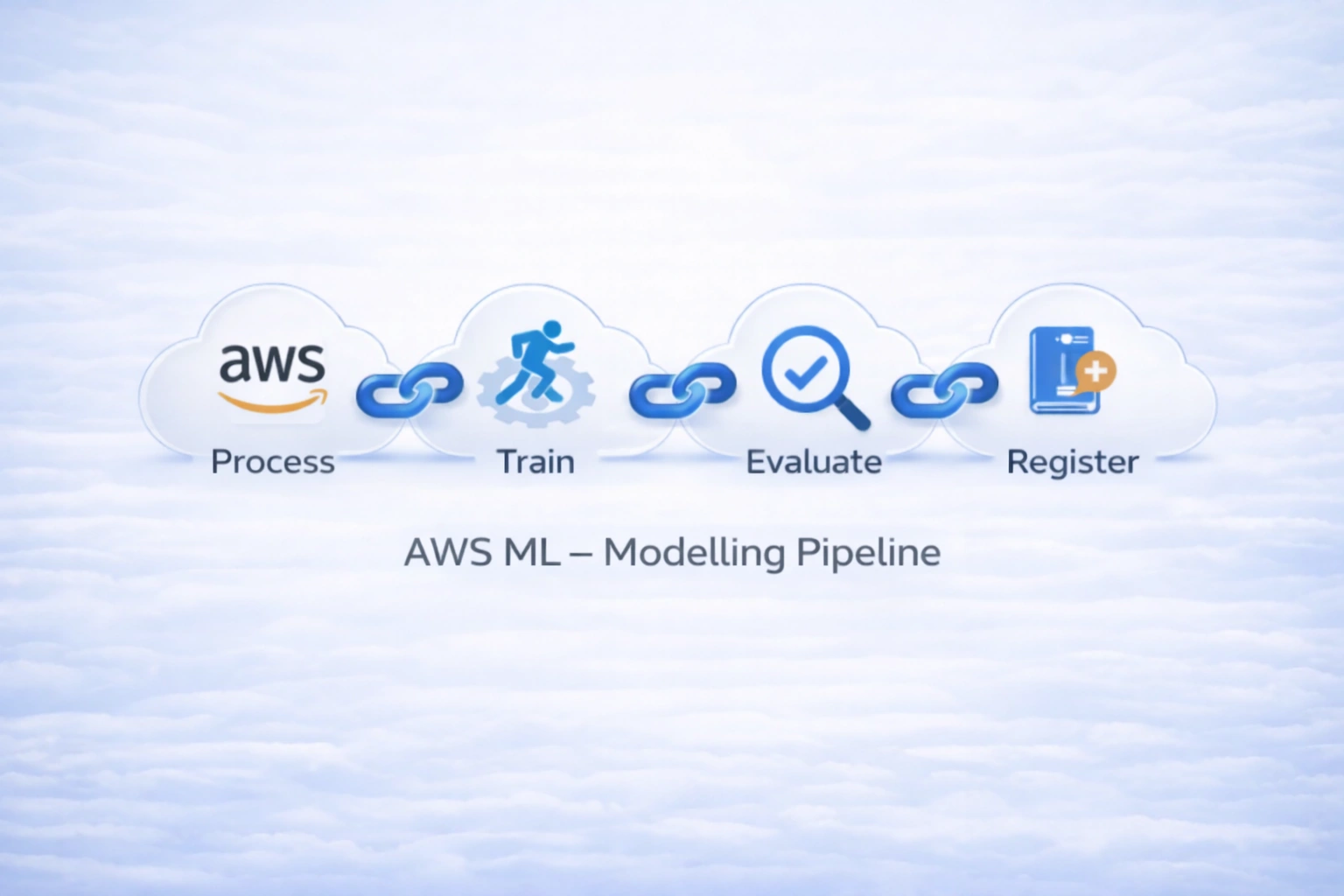

End-to-end ML platform implementations for automated model building, training, evaluation, and registration

Production-grade Azure ML pipeline that automates model building, training, evaluation, and governed registration using CI/CD-driven orchestration with experiment tracking and promotion gates.

Fully automated ML training pipeline on AWS: data preprocessing, XGBoost training, evaluation with conditional quality gate (MSE), and governed model registration in SageMaker Model Registry. Triggered via GitHub Actions + OIDC.

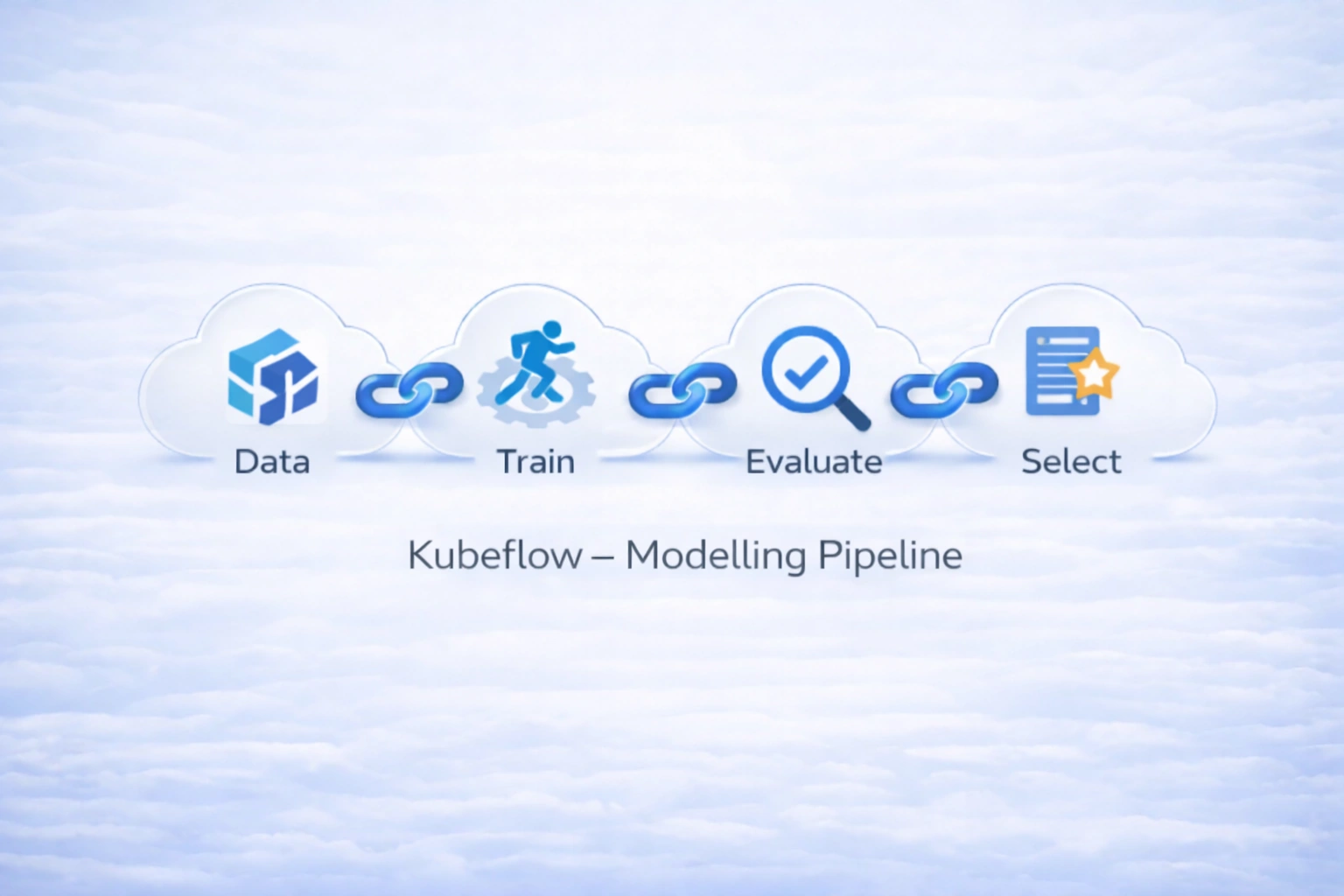
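The conditional MSE quality gate in the AWS training pipeline above can be illustrated with a minimal, framework-free sketch. It is not the pipeline's actual step code: the function names, the `max_mse` threshold, and the `register` callback are hypothetical stand-ins for the evaluation step and the SageMaker Model Registry call.

```python
from typing import Callable, Sequence


def mean_squared_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average of squared differences between targets and predictions."""
    if len(y_true) == 0 or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def gate_and_register(
    y_true: Sequence[float],
    y_pred: Sequence[float],
    max_mse: float,                      # hypothetical threshold, set per project
    register: Callable[[float], None],   # hypothetical stand-in for a registry call
) -> bool:
    """Call `register` only when the evaluation MSE is within the threshold."""
    mse = mean_squared_error(y_true, y_pred)
    if mse <= max_mse:
        register(mse)
        return True
    return False
```

Keeping the gate as a plain comparison on one evaluation metric makes the pass/fail decision easy to audit: a model that misses the threshold never reaches the registration call.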

Production‑grade ML training, evaluation, gating, and conditional registration pipeline on Google Vertex AI using Kubeflow Pipelines (KFP v2). Enforces model quality, tracks lineage, and registers only validated models in Vertex AI Model Registry.

Production‑style ML training and evaluation pipelines using Kubeflow Pipelines (KFP v2) with containerized components, artifact lineage, and metric‑driven model selection across multiple algorithms (LR, DT), so that only the best‑scoring candidate moves forward.

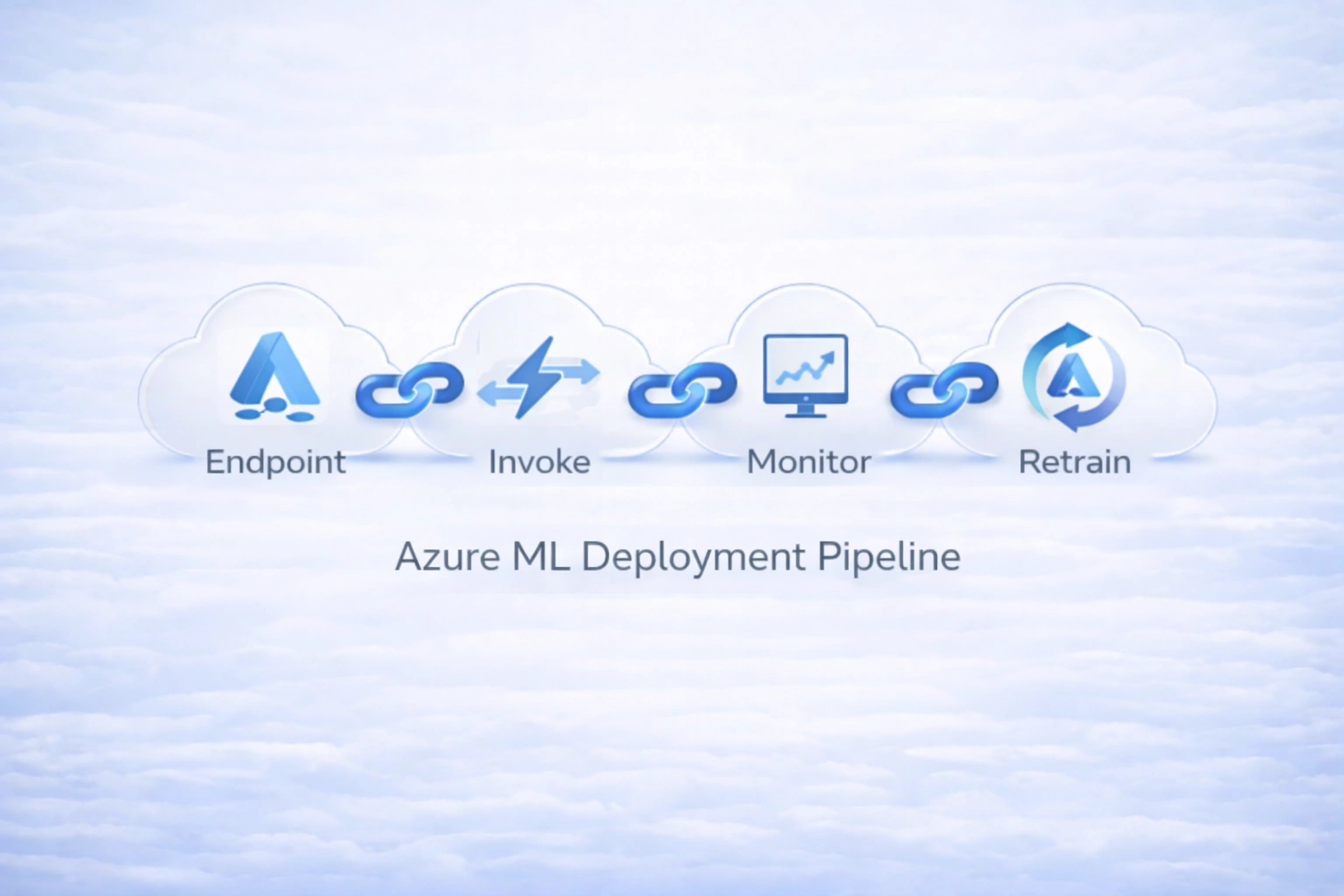
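Metric-driven model selection across several trained candidates, as in the Kubeflow pipelines above, reduces to comparing one evaluation metric per candidate. The sketch below is a plain-Python illustration under assumptions: the `Candidate` type, the `select_best` helper, and the scores in the usage lines are hypothetical and do not come from the actual pipeline components.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Candidate:
    name: str       # label for the algorithm, e.g. "LR" or "DT"
    metric: float   # evaluation metric reported by that candidate's training run


def select_best(candidates: Iterable[Candidate], higher_is_better: bool = True) -> Candidate:
    """Return the candidate with the best metric; ties go to the earliest entry."""
    pool = list(candidates)
    if not pool:
        raise ValueError("at least one candidate is required")
    pick = max if higher_is_better else min
    return pick(pool, key=lambda c: c.metric)


# Hypothetical scores, for illustration only.
runs = [Candidate("LR", 0.81), Candidate("DT", 0.86)]
best = select_best(runs)
```

For an error metric such as MSE, pass `higher_is_better=False` so the lowest value wins.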

Production-grade model deployment pipelines, serving infrastructure, and operational monitoring systems

Production-grade Azure ML deployment pipeline that automates endpoint creation, model serving, traffic routing, validation, monitoring, and retraining orchestration using CI/CD.

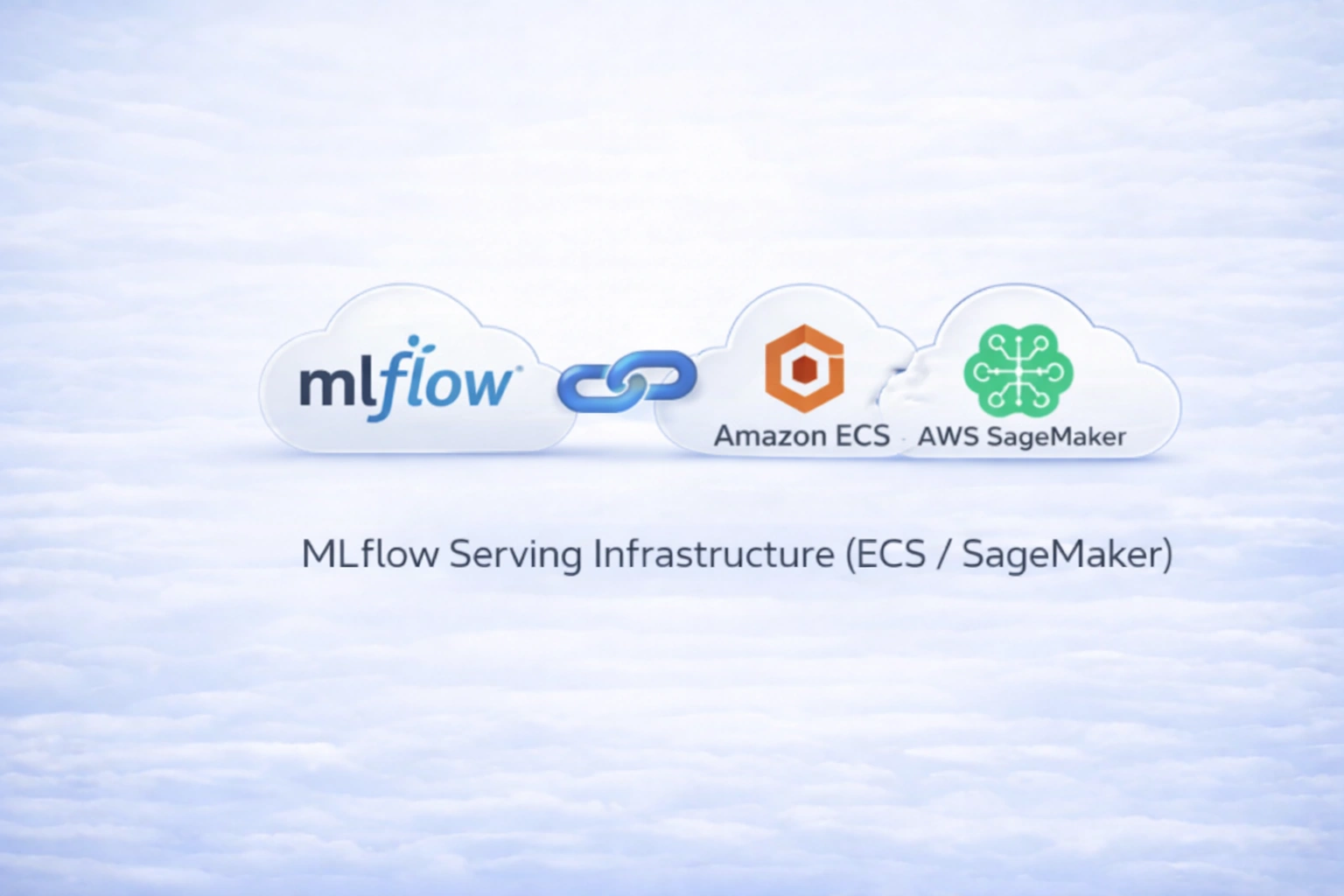

Custom MLflow inference containers and SageMaker endpoint deployment for production model serving with custom Docker images, ECR integration, and real-time inference capabilities.

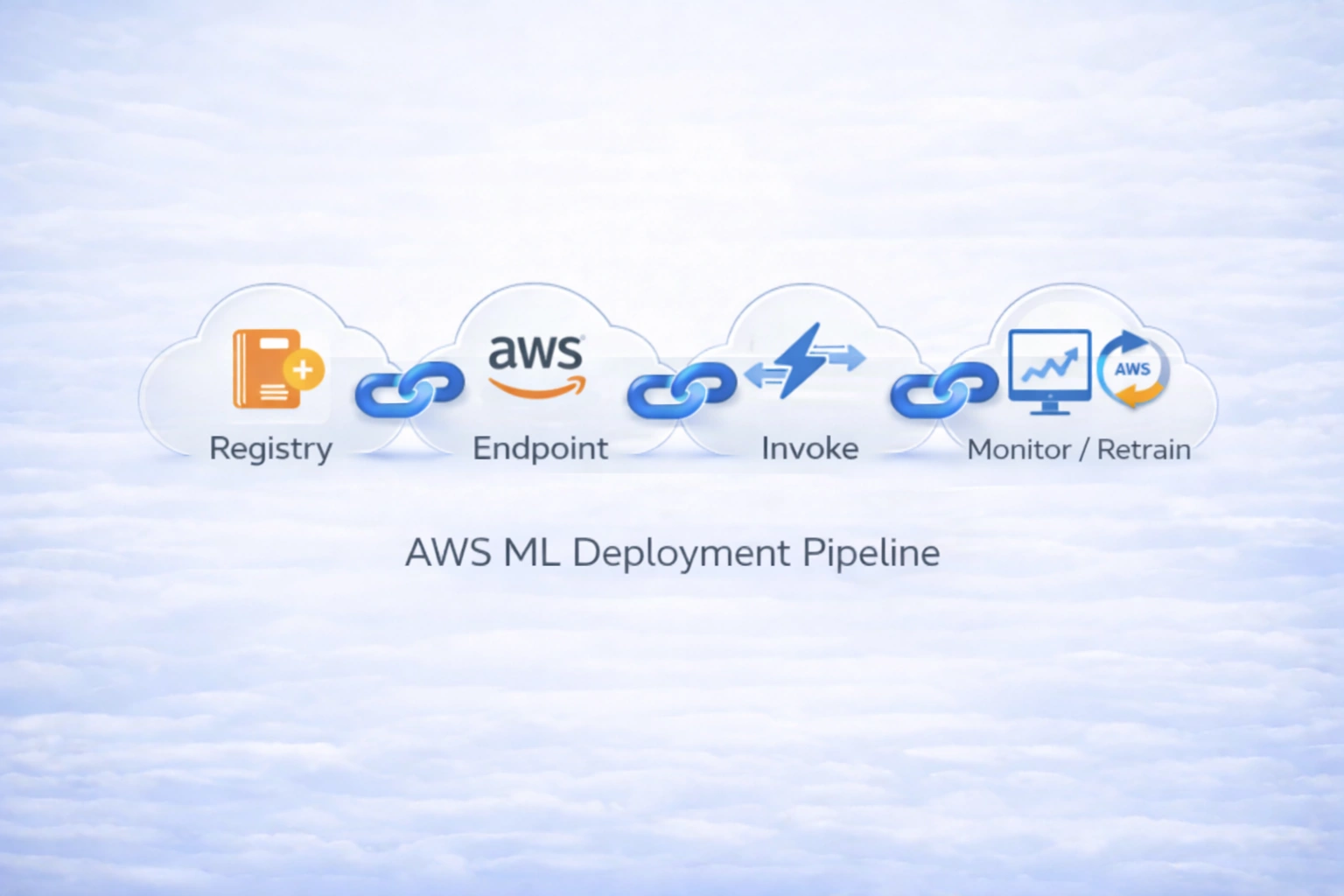

Automated promotion of approved models from SageMaker Model Registry to real‑time endpoints using AWS CDK and CI/CD (GitHub Actions + OIDC). Multi‑environment (dev, pre‑prod, prod) with least‑privilege IAM, KMS encryption, and integrated monitoring for retraining triggers.

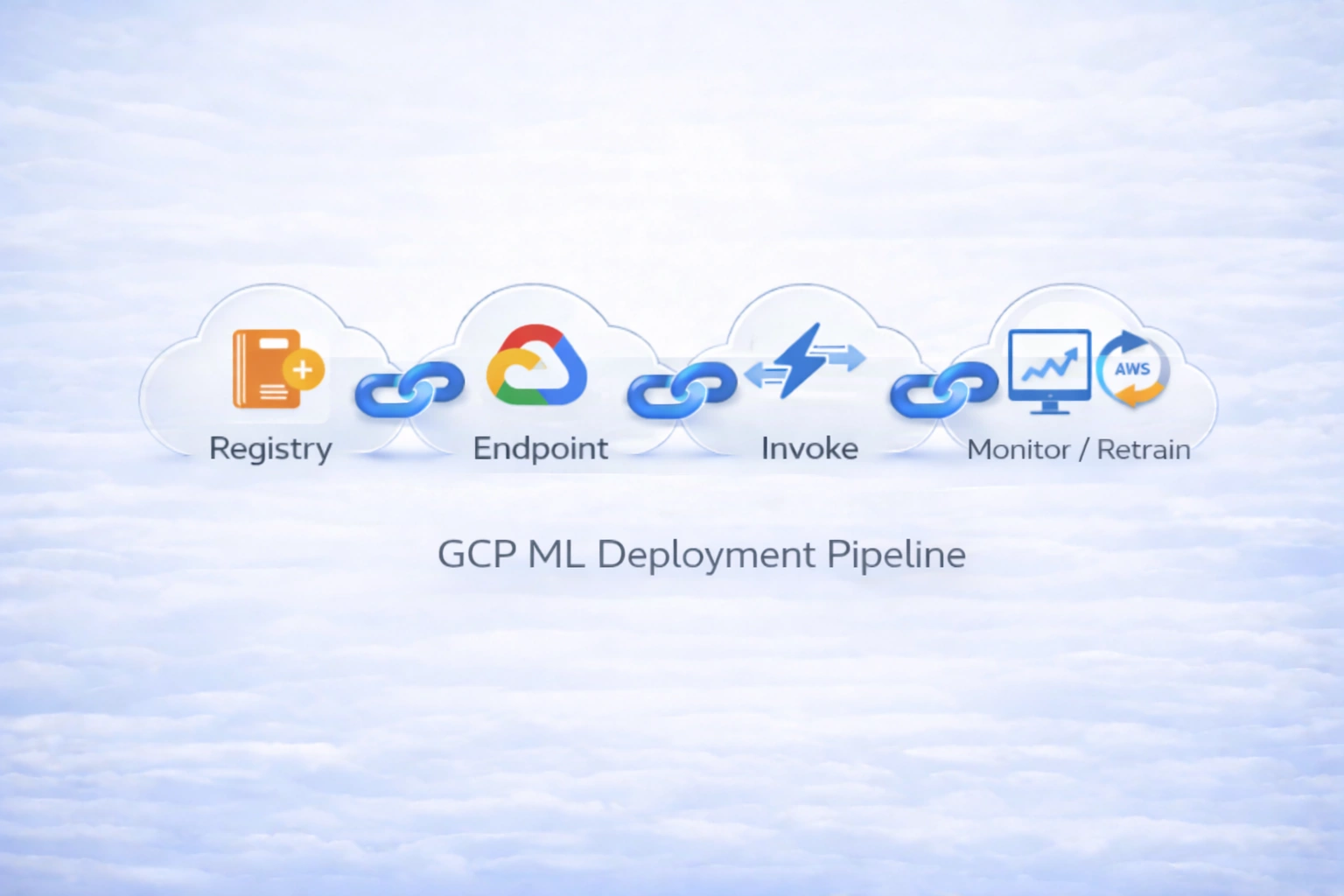

Production‑grade model deployment pipeline on Google Cloud with Vertex AI Endpoints, traffic splitting (blue/green, canary), and scheduled retraining. Automates model promotion from Vertex AI Model Registry to managed online inference endpoints with integrated monitoring and continuous refresh loops.

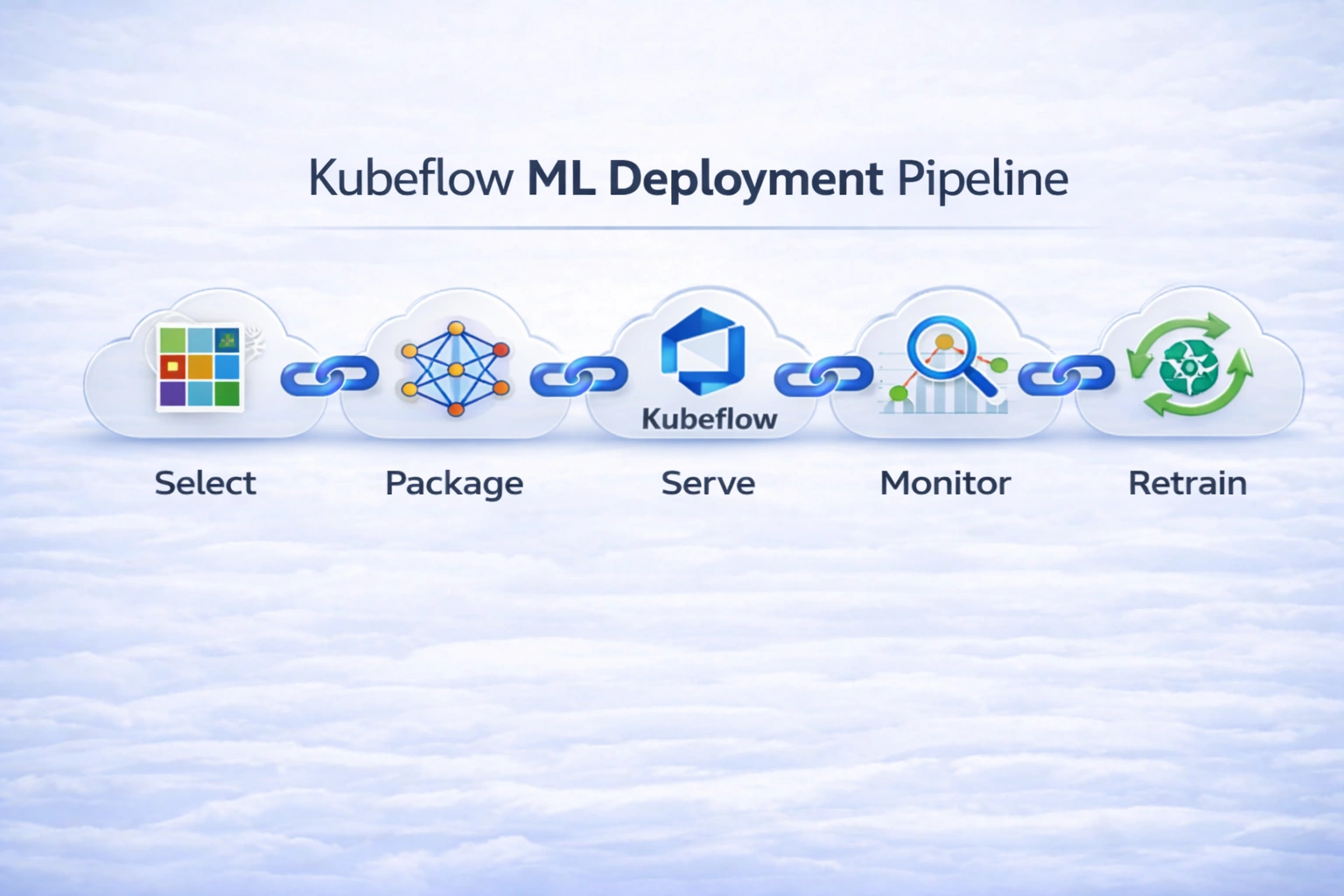
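Canary traffic splitting, as used in the Vertex AI deployment above, assigns each model version a percentage of requests, with the percentages summing to 100. The sketch below shows the idea with a deterministic hash-based router. This is an illustrative assumption, not how Vertex AI routes requests internally, and `choose_version` and the version labels are hypothetical names.

```python
import hashlib
from typing import Mapping


def choose_version(request_id: str, split: Mapping[str, int]) -> str:
    """Map a request to a model version according to percentage weights.

    `split` maps a version label to an integer percentage; values must sum to 100.
    Hashing the request ID keeps the assignment stable for repeated requests.
    """
    if sum(split.values()) != 100:
        raise ValueError("traffic percentages must sum to 100")
    bucket = int(hashlib.sha256(request_id.encode("utf-8")).hexdigest(), 16) % 100
    cumulative = 0
    for version, percent in split.items():
        cumulative += percent
        if bucket < cumulative:
            return version
    raise AssertionError("unreachable when percentages sum to 100")
```

A canary rollout then becomes a sequence of split updates, for example `{"stable": 90, "canary": 10}` followed by larger canary shares once monitoring looks healthy, while a blue/green cutover switches from `{"blue": 100, "green": 0}` to the reverse in one step.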

Production‑grade model deployment pipeline on Kubernetes with Kubeflow, KServe, and integrated monitoring. Automates metric‑gated model promotion from training pipelines to containerized inference services with traffic splitting (rolling updates, canary) and continuous retraining loops.

Data versioning, feature store implementations, and reproducible data pipelines for ML systems

Git-like version control for datasets and ML artifacts with cloud storage integration (S3/Azure Blob), pipeline tracking, and reproducible ML workflows across training and experimentation cycles.
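Git-like dataset versioning usually rests on content addressing: a dataset version is identified by a hash of its bytes, and the data is stored under that hash. The sketch below illustrates the idea with a local cache directory. It is an assumption-laden toy, not the project's tooling: `content_hash`, `snapshot`, the SHA-256 choice, and the local directory standing in for S3 or Azure Blob are all hypothetical.

```python
import hashlib
import shutil
from pathlib import Path


def content_hash(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file's contents in chunks so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def snapshot(path: Path, cache_dir: Path) -> str:
    """Copy the file into the cache under its content hash and return that hash.

    The returned hash is the small pointer that would be committed to Git,
    while the cache directory stands in for remote object storage.
    """
    version = content_hash(path)
    target = cache_dir / version
    if not target.exists():
        cache_dir.mkdir(parents=True, exist_ok=True)
        shutil.copyfile(path, target)
    return version
```

Because the identifier depends only on content, re-running a pipeline against the same hash reads the same bytes, which is what makes training runs reproducible.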

Production-grade feature store implementation for consistent feature engineering across training and serving, with unified feature repository, online/offline stores, and feature registry capabilities.
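The consistency claim for the feature store comes from computing features once, with one definition, and writing the result to both stores. The in-memory sketch below illustrates that pattern under assumptions: `TinyFeatureStore`, `compute_features`, and the example feature names are hypothetical and do not reflect the actual feature repository.

```python
from typing import Any, Dict, List, Tuple


def compute_features(raw: Dict[str, float]) -> Dict[str, Any]:
    """Single feature definition shared by training and serving (hypothetical features)."""
    return {
        "amount_per_item": raw["amount"] / max(raw["items"], 1),
        "is_large_order": raw["amount"] > 100,
    }


class TinyFeatureStore:
    def __init__(self) -> None:
        self.offline: List[Dict[str, Any]] = []                    # full history, for training
        self.online: Dict[str, Tuple[int, Dict[str, Any]]] = {}    # latest values, for serving

    def ingest(self, entity_id: str, timestamp: int, raw: Dict[str, float]) -> None:
        """Compute features once, then write them to both stores."""
        features = compute_features(raw)
        self.offline.append({"entity_id": entity_id, "timestamp": timestamp, **features})
        current = self.online.get(entity_id)
        if current is None or timestamp >= current[0]:
            self.online[entity_id] = (timestamp, features)

    def get_online(self, entity_id: str) -> Dict[str, Any]:
        """Low-latency lookup of the most recent features for one entity."""
        return self.online[entity_id][1]

    def get_training_rows(self) -> List[Dict[str, Any]]:
        """Historical rows for building a training set."""
        return list(self.offline)
```

Since both stores are fed by the same `compute_features` call, the values a model sees at serving time match the latest row it could have been trained on, which avoids training/serving skew.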