MLflow on AWS

CDK IaC + SageMaker Training & Deployment | MLOps Platform

Production-grade ML platform that uses AWS CDK to provision MLflow (on ECS Fargate) and SageMaker for the train → register → deploy lifecycle. The project builds end-to-end MLOps infrastructure with an Infrastructure as Code approach.

Project Summary

Comprehensive MLOps Platform Overview

Project Category

MLOps / Cloud / DevOps

Industry/Domain

Cross-industry (Enterprise AI Platform / SaaS / Consulting-ready)

MLOps Focus

Machine Learning Platform Engineering / MLOps Infrastructure

Key Technologies & Concepts

Core MLOps Technologies Used

MLOps Platform Keywords

Problem & Objective

What problem did this project solve?

Problems Solved

- No unified, production-grade platform to manage ML experiments, models, and deployments across cloud infrastructure

- Lack of centralized experiment tracking and model versioning

- Manual and error-prone ML workflow management

Primary Objectives

- Create a production-grade ML platform using AWS CDK (Infrastructure as Code)

- Provision MLflow on ECS Fargate for experiment tracking and model registry

- Integrate SageMaker for training and real-time deployment

- Establish full ML lifecycle: Train → Track → Register → Deploy

Solution & Architecture

Architectural Overview

Solution Overview

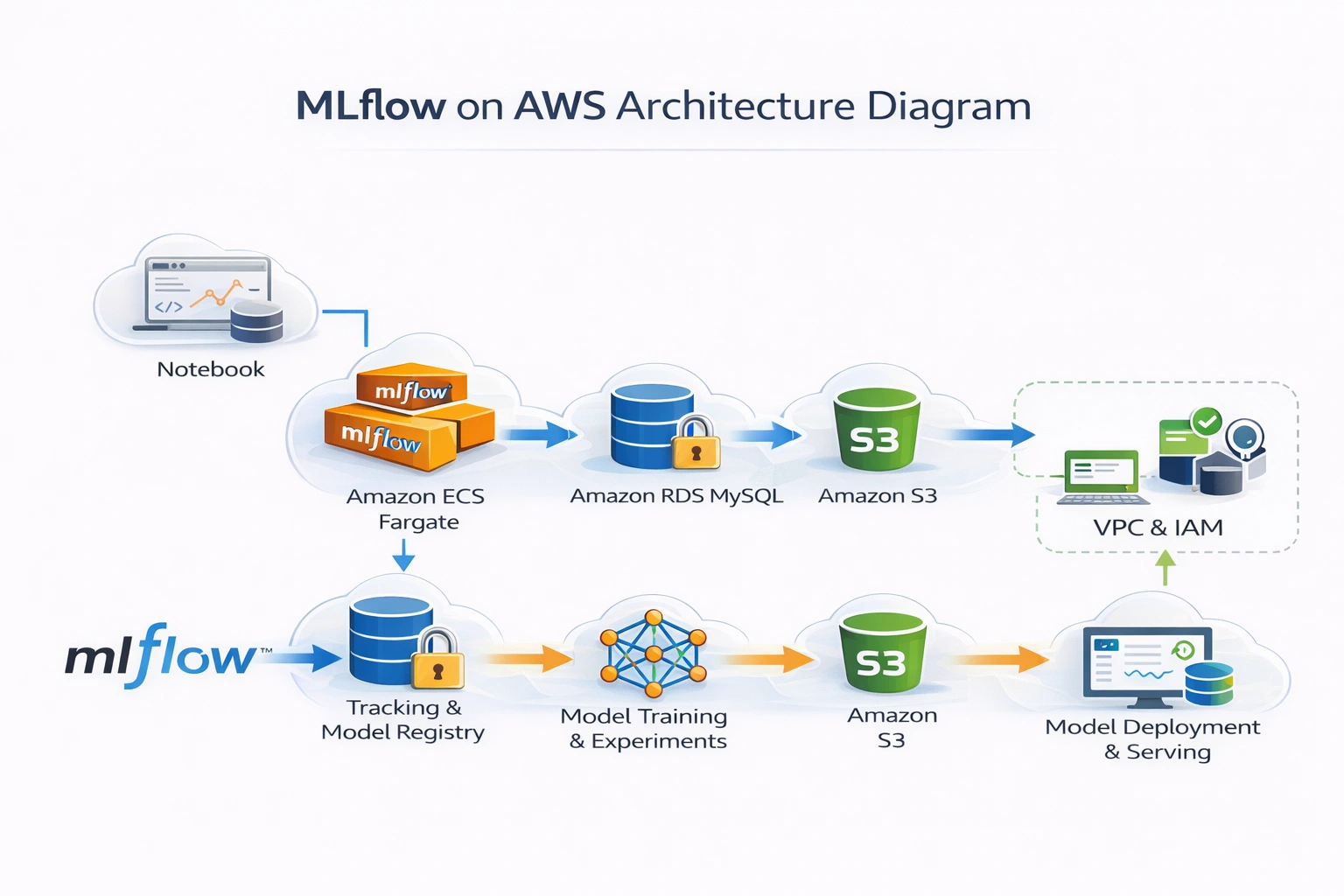

Provisioned MLflow on ECS Fargate using AWS CDK, integrated SageMaker for training and real-time deployment, with S3 for artifacts and RDS for metadata — forming a full ML lifecycle platform.

MLflow provides centralized experiment tracking and model registry while SageMaker offers managed training and inference, creating a comprehensive MLOps platform on AWS.

This solution enables reproducible ML experiments, versioned model management, and automated deployment workflows with full lineage tracking and governance.

Key Components

- AWS CDK (IaC): Infrastructure as Code deployment

- ECS Fargate: MLflow Tracking Server hosting

- Application Load Balancer: MLflow UI access

- Amazon RDS (MySQL): MLflow backend store

- Amazon S3: Artifact storage for models and experiments

- Amazon ECR: MLflow inference container registry

- Amazon SageMaker: Training jobs + real-time endpoints

- IAM Roles & Secrets Manager: Secure access management

Scalability & Reliability Considerations

- Stateless MLflow server on Fargate for horizontal scalability

- Externalized state management (S3 + RDS)

- Managed training & inference via SageMaker

- Load-balanced MLflow UI for high availability

- Infrastructure reproducible via CDK for disaster recovery
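As a rough illustration of how the components above might be wired together in CDK, the following Python sketch places a Fargate service, an RDS MySQL instance, and an S3 bucket in one stack. It is not the project's actual stack: the construct IDs, the `container` directory, the database name, the sizing values, the environment variable names, and the helper `mlflow_container_env` are all assumptions made for illustration.

```python
def mlflow_container_env(bucket_name: str, db_host: str, db_name: str) -> dict:
    """Non-secret environment variables for the MLflow container.

    The variable names are hypothetical; they must match whatever the
    container's start command expects.
    """
    return {
        "BUCKET": f"s3://{bucket_name}",
        "HOST": db_host,
        "PORT": "3306",
        "DATABASE": db_name,
    }


def build_mlflow_stack(scope, stack_id: str = "MlflowStack"):
    """Assemble the stack. aws-cdk-lib is imported lazily so the helper
    above can be used without CDK installed."""
    from aws_cdk import Stack, aws_ec2 as ec2, aws_ecs as ecs
    from aws_cdk import aws_ecs_patterns as ecs_patterns
    from aws_cdk import aws_rds as rds, aws_s3 as s3

    stack = Stack(scope, stack_id)
    vpc = ec2.Vpc(stack, "Vpc", max_azs=2)

    # Externalized state: S3 for artifacts, RDS MySQL for metadata.
    bucket = s3.Bucket(stack, "ArtifactBucket")
    database = rds.DatabaseInstance(
        stack, "BackendStore",
        engine=rds.DatabaseInstanceEngine.mysql(
            version=rds.MysqlEngineVersion.VER_8_0),
        vpc=vpc,
        credentials=rds.Credentials.from_generated_secret("mlflow"),
        database_name="mlflowdb",
    )

    # Stateless MLflow server on Fargate behind an Application Load Balancer.
    service = ecs_patterns.ApplicationLoadBalancedFargateService(
        stack, "MlflowService",
        cluster=ecs.Cluster(stack, "Cluster", vpc=vpc),
        cpu=512, memory_limit_mib=1024, desired_count=2,
        task_image_options=ecs_patterns.ApplicationLoadBalancedTaskImageOptions(
            image=ecs.ContainerImage.from_asset("container"),
            container_port=5000,
            environment=mlflow_container_env(
                bucket.bucket_name,
                database.db_instance_endpoint_address,
                "mlflowdb"),
            # Database credentials are injected from Secrets Manager.
            secrets={
                "USERNAME": ecs.Secret.from_secrets_manager(
                    database.secret, "username"),
                "PASSWORD": ecs.Secret.from_secrets_manager(
                    database.secret, "password"),
            },
        ),
    )

    # Least-privilege wiring: the task may use the bucket and reach the DB.
    bucket.grant_read_write(service.task_definition.task_role)
    database.connections.allow_default_port_from(service.service)
    return stack
```

In a CDK app this would be called as `build_mlflow_stack(App())` before `app.synth()`. Because all state lives in S3 and RDS, `desired_count` can be raised without changing anything else in the sketch.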

AI / DevOps Details

MLOps Platform Engineering Implementation

AI/ML Type & DevOps Focus

- AI/ML Type: MLOps Platform Engineering

- DevOps Focus: Infrastructure as Code, CI/CD for ML

- Models/Pipelines: ML pipelines defined as code (Pipeline as Code)

Models, Pipeline & Automation

- MLflow-based experiment tracking

- SageMaker training pipelines

- Model registry → deployment automation

- Infrastructure as Code for ML platform

CI/CD, Containerization & Orchestration

- Docker: MLflow inference container

- Amazon ECR: Container registry

- ECS Fargate: Container orchestration

- AWS CDK: Infrastructure deployment

Monitoring, Logging & Optimization

- MLflow experiment metrics tracking

- SageMaker endpoint monitoring

- Cost-aware endpoint deletion

- Centralized model lineage

Skills & Technologies Used

Technical Proficiency Demonstrated

Primary Skills

- MLOps Platform Engineering (Advanced)

- AWS Cloud Architecture (Advanced)

- Infrastructure as Code - CDK (Advanced)

Secondary Tools / Frameworks

- MLflow (Experiment tracking & model registry)

- SageMaker SDK

- Boto3 (AWS Python SDK)

- Docker (Containerization)

Programming Languages

- Python: Primary language for CDK, training scripts, and automation

- YAML/JSON: Configuration files and CloudFormation templates

AWS Cloud & DevOps Tools

Implementation Details

Step-by-Step Implementation Process

Stage 1: CDK Toolkit Deployment

- Step 1: Activate the Python virtual environment: source .venv/bin/activate

- Step 2: Install required libraries: pip install -r requirements.txt

- Step 3: Bootstrap CDK (creates the CDK toolkit stack in CloudFormation): cdk bootstrap

- Step 4: Synthesize the CloudFormation template: cdk synth

- Step 5: Deploy the stack: cdk deploy --parameters ProjectName=mflow1 --require-approval never

# Activate the Python virtual environment

source .venv/bin/activate

# Install dependencies

pip install -r requirements.txt

# Bootstrap CDK

cdk bootstrap

# Deploy MLflow stack

cdk deploy --parameters ProjectName=mflow1 --require-approval never
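For repeatable deployments, the same CDK commands could be driven from a small Python helper. This is only a sketch: the function names `cdk_commands` and `run_all` are hypothetical, and it assumes the `cdk` CLI is on the PATH and the virtual environment is already active.

```python
import shlex
import subprocess


def cdk_commands(project_name: str) -> list:
    """Return the CDK invocations from the steps above, in order."""
    return [
        ["cdk", "bootstrap"],
        ["cdk", "synth"],
        ["cdk", "deploy",
         "--parameters", f"ProjectName={project_name}",
         "--require-approval", "never"],
    ]


def run_all(project_name: str, dry_run: bool = True) -> None:
    """Print each command, and execute it unless dry_run is set."""
    for argv in cdk_commands(project_name):
        print(shlex.join(argv))
        if not dry_run:
            # check=True stops the sequence at the first failing step.
            subprocess.run(argv, check=True)
```

Calling `run_all("mflow1")` only prints the commands; pass `dry_run=False` to execute them.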

Stage 2: MLflow Components Setup

Four Components of MLflow:

- Tracking: API and UI for logging model information (parameters, code versions, metrics)

- Projects: Tool for organizing and managing ML projects (reusable and reproducible)

- Models: Tool for packaging and deploying machine learning models

- Model Registry: Tool for managing the lifecycle of MLflow models (versioning, storage, lineage)

MLflow is deployed on AWS Fargate (serverless container hosting). Its state is externalized: S3 stores artifacts and RDS MySQL stores metadata, so the server container itself holds no persistent data.
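To make the externalized-state wiring concrete, the sketch below assembles an `mlflow server` command line from environment-style settings. The variable names (`BUCKET`, `USERNAME`, `PASSWORD`, `HOST`, `PORT`, `DATABASE`) and both function names are assumptions chosen for illustration. URL-encoding the credentials is an addition that guards against special characters in generated passwords.

```python
from urllib.parse import quote_plus


def backend_store_uri(env) -> str:
    """Build the SQLAlchemy URI MLflow uses for its metadata store."""
    user = quote_plus(env["USERNAME"])
    password = quote_plus(env["PASSWORD"])
    return (f"mysql+pymysql://{user}:{password}"
            f"@{env['HOST']}:{env['PORT']}/{env['DATABASE']}")


def mlflow_server_argv(env) -> list:
    """Command line for a tracking server that keeps no local state."""
    return [
        "mlflow", "server",
        "--host", "0.0.0.0",
        "--port", "5000",
        "--default-artifact-root", env["BUCKET"],      # artifacts go to S3
        "--backend-store-uri", backend_store_uri(env),  # metadata goes to RDS
    ]
```

An entrypoint script could pass `os.environ` to `mlflow_server_argv` and hand the result to `os.execvp("mlflow", argv)`.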

Stage 3: SageMaker Training & Deployment

Amazon SageMaker Components:

- Notebook Instances: Jupyter notebooks for exploration and analysis

- Algorithms: Built-in algorithms (XGBoost, Linear Learner, KNN, etc.)

- ML Training Service: Managed training and hyperparameter tuning

- ML Hosting Services: Real-time inference endpoints

Training Pipeline:

- Prepare training data and upload to S3

- Configure SageMaker SKLearn estimator with hyperparameters

- Set MLflow tracking URI to remote ECS-hosted MLflow server

- Launch training job with S3 data paths

- Log parameters, metrics, and model artifacts to MLflow
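The training side of this pipeline might look roughly like the script below. It is a sketch, not the project's training code: the model type, the hyperparameter names, the `train.csv` file, the `label` column, and the experiment name are all hypothetical. It assumes `mlflow`, `pandas`, and `scikit-learn` are installed in the training container and that `MLFLOW_TRACKING_URI` is set in its environment.

```python
import argparse
import os


def parse_args(argv=None):
    """SageMaker script mode passes hyperparameters as command-line flags
    and channel locations as SM_* environment variables."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--n-estimators", type=int, default=100)
    parser.add_argument("--max-depth", type=int, default=5)
    parser.add_argument("--train",
                        default=os.environ.get("SM_CHANNEL_TRAIN", "data"))
    parser.add_argument("--target", default="label")
    return parser.parse_args(argv)


def train(args):
    # Imported lazily: these packages must exist in the training image.
    import mlflow
    import mlflow.sklearn
    import pandas as pd
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.model_selection import train_test_split

    # Point the client at the remote tracking server (the ALB endpoint).
    mlflow.set_tracking_uri(os.environ["MLFLOW_TRACKING_URI"])
    mlflow.set_experiment("sagemaker-training")  # hypothetical name

    data = pd.read_csv(os.path.join(args.train, "train.csv"))
    X, y = data.drop(columns=[args.target]), data[args.target]
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, random_state=42)

    params = {"n_estimators": args.n_estimators, "max_depth": args.max_depth}
    with mlflow.start_run():
        model = RandomForestClassifier(**params).fit(X_train, y_train)
        # Parameters, metrics, and the model artifact all go to MLflow.
        mlflow.log_params(params)
        mlflow.log_metric("accuracy", model.score(X_test, y_test))
        mlflow.sklearn.log_model(model, artifact_path="model")
```

The script's entry point would simply call `train(parse_args())`.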

Stage 4: MLflow Inference Container & Deployment

Docker Image for the MLflow Server:

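FROM python:3.10.12

# Install MLflow, the MySQL driver for the backend store, and boto3 for S3 access
RUN pip install \
    mlflow==2.15.1 \
    pymysql==1.0.2 \
    boto3 && \
    mkdir /mlflow/

EXPOSE 5000

# BUCKET, USERNAME, PASSWORD, HOST, PORT, and DATABASE are read from the
# container's environment at start-up. ${VAR} expands a variable, whereas
# $(VAR) would be treated by the shell as a command to run.
CMD mlflow server \
    --host 0.0.0.0 \
    --port 5000 \
    --default-artifact-root ${BUCKET} \
    --backend-store-uri mysql+pymysql://${USERNAME}:${PASSWORD}@${HOST}:${PORT}/${DATABASE}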

Deployment Steps:

- Build and push MLflow Docker image to ECR

- Configure SageMaker endpoint with MLflow model URI

- Deploy model to SageMaker real-time endpoint

- Test inference with sample payload
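The deployment and test steps above could be scripted along the following lines. Treat it as a sketch under assumptions: the function names, instance type, and default region are illustrative, and the configuration keys follow MLflow's SageMaker deployment client as documented for MLflow 2.x. The payload uses the `dataframe_split` JSON format that MLflow 2.x scoring servers accept.

```python
import json


def deployment_config(image_url, role_arn, bucket, region="us-east-1"):
    """Settings passed to MLflow's SageMaker deployment client."""
    return {
        "image_url": image_url,           # MLflow image pushed to ECR
        "execution_role_arn": role_arn,   # role SageMaker assumes
        "bucket_name": bucket,            # S3 bucket for the model archive
        "region_name": region,
        "instance_type": "ml.m5.large",
        "instance_count": 1,
        "synchronous": True,              # wait until the endpoint is ready
    }


def deploy(endpoint_name, model_uri, config):
    # Imported lazily: requires mlflow and AWS credentials at call time.
    from mlflow.deployments import get_deploy_client

    client = get_deploy_client("sagemaker")
    return client.create_deployment(
        name=endpoint_name, model_uri=model_uri,
        flavor="python_function", config=config)


def build_payload(columns, rows) -> str:
    """Serialize rows in the 'dataframe_split' scoring format."""
    return json.dumps({"dataframe_split": {"columns": columns, "data": rows}})


def invoke(endpoint_name, payload, region="us-east-1"):
    # Imported lazily: requires boto3 and AWS credentials at call time.
    import boto3

    runtime = boto3.client("sagemaker-runtime", region_name=region)
    response = runtime.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=payload)
    return json.loads(response["Body"].read())
```

A model URI such as `models:/<registered-name>/<version>` ties the endpoint to a specific registry version, which keeps the deployed model traceable back to its training run.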

Challenges & Outcomes

Technical Challenges and Resolutions

Key Technical Challenges

- Wiring SageMaker training jobs to remote MLflow server

- Building custom MLflow inference containers

- Designing stateful ML platform on stateless compute (Fargate)

- Managing secure credentials (DB + S3 + ECS roles)

How They Were Resolved

- SageMaker to MLflow: Configured MLFLOW_TRACKING_URI in training scripts to point to ECS-hosted MLflow ALB endpoint

- Custom containers: Built and versioned MLflow-serving Docker images, pushed to ECR

- State management: Externalized state using RDS for backend store and S3 for artifacts

- Credentials: Stored secrets in AWS Secrets Manager and granted access to them through IAM roles attached to the ECS tasks
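The first resolution, pointing SageMaker training jobs at the remote MLflow server, can be sketched with the SageMaker Python SDK's `environment` argument, which sets environment variables inside the training container. The entry point name, `source_dir`, instance type, framework version, and hyperparameter below are assumptions for illustration. The sketch also assumes that `source_dir` contains a `requirements.txt` that installs `mlflow`.

```python
def estimator_kwargs(tracking_uri, role_arn, n_estimators=100):
    """Arguments for a SageMaker SKLearn estimator that reports to MLflow."""
    return {
        "entry_point": "train.py",        # hypothetical training script
        "source_dir": "source_dir",       # hypothetical; holds requirements.txt
        "role": role_arn,
        "instance_type": "ml.m5.xlarge",
        "instance_count": 1,
        "framework_version": "1.2-1",
        "hyperparameters": {"n-estimators": n_estimators},
        # The MLflow client inside the job reads this variable.
        "environment": {"MLFLOW_TRACKING_URI": tracking_uri},
    }


def launch_training(kwargs, train_s3_uri):
    # Imported lazily: requires the sagemaker SDK and AWS credentials.
    from sagemaker.sklearn.estimator import SKLearn

    estimator = SKLearn(**kwargs)
    # The "train" channel is exposed to the script as SM_CHANNEL_TRAIN.
    estimator.fit({"train": train_s3_uri})
    return estimator
```

The training job must also be able to reach the ALB over the network, so an internal load balancer would additionally need the job to run inside the same VPC.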

Monitoring & Cost Management

- MLflow experiment metrics for performance tracking

- SageMaker endpoint monitoring for latency and errors

- Cost-aware endpoint deletion to avoid unnecessary charges

- Centralized model lineage for audit and compliance

Monitoring in MLOps involves observing model performance, health, behavior, and infrastructure to ensure models perform as expected and identify issues early.
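Cost-aware endpoint deletion can be as simple as tearing down the endpoint together with the resources behind it. The sketch below is one possible approach rather than the project's own script. The function name is hypothetical, and it takes the client as a parameter so that it can be exercised without AWS access.

```python
def delete_endpoint_resources(sm_client, endpoint_name: str) -> list:
    """Delete a SageMaker endpoint plus its endpoint config and models.

    sm_client is expected to behave like boto3.client("sagemaker").
    Returns the names of everything that was deleted.
    """
    # Look up the dependent resources before deleting anything.
    endpoint = sm_client.describe_endpoint(EndpointName=endpoint_name)
    config_name = endpoint["EndpointConfigName"]
    config = sm_client.describe_endpoint_config(
        EndpointConfigName=config_name)
    model_names = [v["ModelName"] for v in config["ProductionVariants"]]

    # The endpoint is what incurs instance charges, so remove it first.
    sm_client.delete_endpoint(EndpointName=endpoint_name)
    sm_client.delete_endpoint_config(EndpointConfigName=config_name)
    for name in model_names:
        sm_client.delete_model(ModelName=name)
    return [endpoint_name, config_name, *model_names]
```

With real credentials this would be called as `delete_endpoint_resources(boto3.client("sagemaker"), "<endpoint-name>")`. The model versions remain in the MLflow registry, so an endpoint can be recreated later from the same model URI.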

AWS CDK Commands Reference

Essential CDK Commands for ML Platform Deployment

| Command | Description | Purpose |
|---|---|---|
| npm install -g aws-cdk | Install AWS CDK CLI globally | Tool installation |
| cdk --version | Verify CDK installation | Version check |
| cdk init app --language python | Create CDK app with Python | Project initialization |
| aws configure | Configure AWS credentials | AWS authentication |
| pip install -r requirements.txt | Install Python dependencies | Dependency management |
| source .venv/bin/activate | Activate Python virtual environment | Environment setup |
| cdk bootstrap | Bootstrap CDK toolkit | One-time setup |
| cdk synth | Synthesize CloudFormation template | Template generation |
| cdk diff | Compare deployed stack with current state | Change detection |
| cdk deploy | Deploy stack to AWS | Infrastructure deployment |

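Why npm (Node.js) for Python CDK? The AWS CDK CLI is a Node.js application distributed through npm, so Node.js is required even when the infrastructure code is written in Python. The Python CDK libraries are bindings that call into the same Node.js-based CDK core at synthesis time.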

Assets & References

Code, diagrams, study material

GitHub Repository

Complete source code repository containing AWS CDK IaC, MLflow configuration, and SageMaker integration.
