SageMaker Modelling Pipeline

Train · Evaluate · Register (MLOps quality gates)

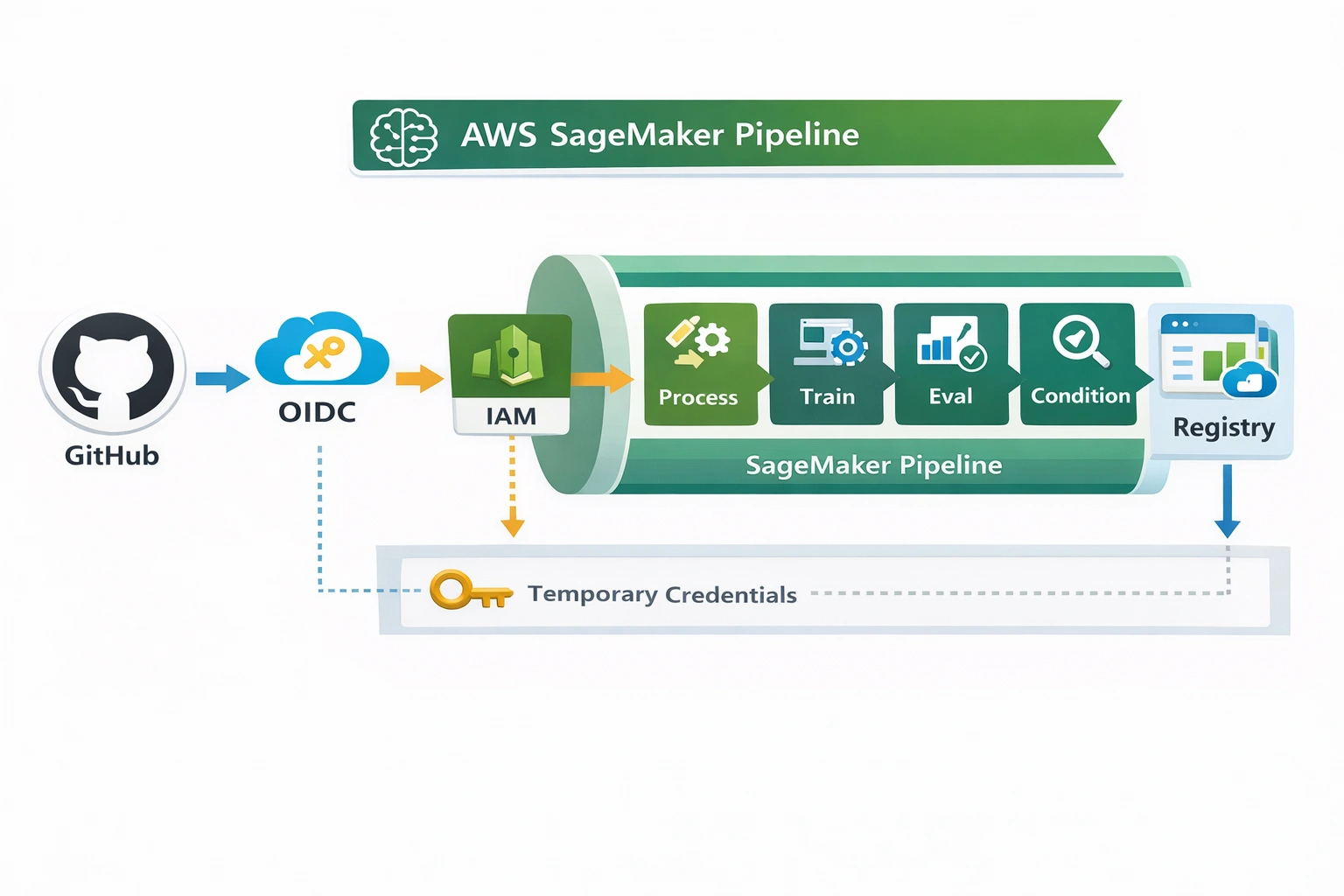

A fully automated ML training pipeline on AWS: data preprocessing, XGBoost training, evaluation behind a conditional quality gate on mean squared error (MSE), and governed model registration in SageMaker Model Registry. Runs are triggered from GitHub Actions, which authenticates to AWS through OIDC.

Project Summary

MLOps · Pipeline-2 (Train/Evaluate/Register)

AI/ML type

Supervised regression (XGBoost) + automated MLOps

Domain

AWS SageMaker · Model building & registration

DevOps focus

CI/CD for ML (GitHub Actions + OIDC + SageMaker Pipelines)

Key technologies

AWS + CI/CD + containers

Problem & Objective

Why this pipeline?

Problems solved

- Manual training → non‑reproducible experiments, inconsistent evaluation

- No quality gates → bad models could reach production

- Lack of governance & traceability

Primary objectives

- Fully automated train/eval/register pipeline on AWS

- Conditional registration based on MSE threshold

- Integrate CI/CD with GitHub Actions + OIDC (no static secrets)

Solution & Architecture

SageMaker Pipeline · quality gate

Overview

A SageMaker Pipeline with four steps: Process → Train → Evaluate → Conditional Register. It is triggered by GitHub Actions (Linux runner), which assumes an IAM role through OIDC. The evaluation step writes its metrics (MSE) to a JSON file; only models that pass the threshold are registered in SageMaker Model Registry, with the approval workflow enabled. Artifacts are stored in S3 and container images in ECR.
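The evaluate-then-gate logic described above can be sketched in plain Python. This is a minimal illustration, not the project's actual evaluation script: the file name `evaluation.json`, the `regression_metrics.mse.value` layout, and the threshold value `6.0` are assumptions chosen for the example, and the MSE is computed by hand so the sketch needs no third-party packages.

```python
import json
import tempfile
from pathlib import Path

MSE_THRESHOLD = 6.0  # hypothetical gate value; the source does not state one


def mean_squared_error(y_true, y_pred):
    """Plain-Python MSE so the sketch has no third-party dependencies."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def write_evaluation_report(mse, output_dir):
    """Write the metric to evaluation.json (file name and layout are assumed)."""
    report = {"regression_metrics": {"mse": {"value": mse}}}
    path = Path(output_dir) / "evaluation.json"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(report, indent=2))
    return path


def passes_quality_gate(report_path, threshold=MSE_THRESHOLD):
    """Return True when the reported MSE is at or below the threshold."""
    report = json.loads(Path(report_path).read_text())
    return report["regression_metrics"]["mse"]["value"] <= threshold


# Usage: evaluate toy predictions, persist the report, then apply the gate.
with tempfile.TemporaryDirectory() as tmp:
    mse = mean_squared_error([3.0, 5.0, 2.0], [2.0, 5.0, 4.0])  # (1 + 0 + 4) / 3
    report_file = write_evaluation_report(mse, tmp)
    decision = "register" if passes_quality_gate(report_file) else "skip registration"
    print(decision)
```

In a real SageMaker Pipeline the comparison would typically live in a condition step that reads the same JSON path from the evaluation output, so the script only has to write the report.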

Skills & Technologies

ML engineering + AWS

Primary skills

- Amazon SageMaker Pipelines (advanced)

- MLOps: training/evaluation/registry (advanced)

- CI/CD with GitHub Actions + OIDC (advanced)

- Docker, ECR, IAM, CloudWatch

Languages & tools

- Python (SDK, processing/training scripts)

- YAML (GitHub workflows, pipeline config)

- XGBoost / scikit-learn

Pipeline execution & governance

conditional registration + approvals

Execution

- Trigger: push to main or workflow_dispatch

- GitHub runner: ubuntu-latest, assumes IAM role via OIDC

- SageMaker Pipeline execution: processing (prep), training (XGBoost), evaluation (MSE to JSON)

- Conditional step: if the MSE passes the threshold → register the model with status PendingManualApproval

Governance & controls

- Quality gate on MSE – underperforming models are rejected automatically and never registered

- Model Registry approval workflow (manual approval step before production)

- Least‑privilege IAM roles + KMS encryption for S3

- CloudWatch logs for every job (traceability)
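One way to keep the OIDC role least-privilege is to restrict its trust policy to a single repository and branch. The sketch below builds such a policy document as a Python dictionary. The account ID, the repository name `example-org/ml-pipeline`, and the helper name `github_oidc_trust_policy` are placeholders invented for illustration; the project's real role and policy are not shown in the source.

```python
import json

GITHUB_OIDC_HOST = "token.actions.githubusercontent.com"


def github_oidc_trust_policy(account_id, repo, branch="main"):
    """Build a trust policy that lets one repo/branch assume the role via OIDC."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    # The GitHub OIDC identity provider registered in the account.
                    "Federated": f"arn:aws:iam::{account_id}:oidc-provider/{GITHUB_OIDC_HOST}"
                },
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        # Audience used by aws-actions/configure-aws-credentials.
                        f"{GITHUB_OIDC_HOST}:aud": "sts.amazonaws.com",
                        # Only workflows running on this repo and branch may assume the role.
                        f"{GITHUB_OIDC_HOST}:sub": f"repo:{repo}:ref:refs/heads/{branch}",
                    }
                },
            }
        ],
    }


# Placeholder account ID and repository name, for illustration only.
policy = github_oidc_trust_policy("123456789012", "example-org/ml-pipeline")
print(json.dumps(policy, indent=2))
```

Pinning the `sub` claim to one branch means a workflow on a fork or feature branch cannot obtain credentials, which matches the "push to main" trigger described above.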

AWS CI/CD · YAML mapping

GitHub Actions → SageMaker Pipeline

| Architecture block | AWS / YAML construct |
|---|---|
| Source repository | GitHub (ml-pipeline repo) |
| CI trigger | on: [push, workflow_dispatch] |
| Runner | ubuntu-latest + aws-actions/configure-aws-credentials (OIDC) |
| Orchestration | SageMaker Pipeline (Process→Train→Evaluate→Condition) |
| Training | SageMaker Training Job (XGBoost container) |
| Processing | SageMaker Processing (sklearn) |
| Artifact storage | S3 (datasets, model.tar.gz, metrics.json) |
| Container registry | Amazon ECR (custom images) |
| Model registry | SageMaker Model Registry + manual approval |
| Auth | OIDC (GitHub OIDC provider) → IAM role |
| Logs | CloudWatch Logs |

YAML steps: checkout, setup-python, pip install, run-pipeline (create/start), wait, publish status.
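The run-pipeline, wait, and publish-status steps could be driven by a small Python helper along these lines. It is a sketch under assumptions, not the repository's script: the function name `run_pipeline`, the polling interval, and the timeout are illustrative. The client is passed in so the logic can be exercised without AWS access; in the workflow it would be a `boto3.client("sagemaker")`, whose `start_pipeline_execution` and `describe_pipeline_execution` calls are used here.

```python
import time

# Statuses after which a pipeline execution will not change any further.
TERMINAL_STATUSES = {"Succeeded", "Failed", "Stopped"}


def run_pipeline(client, pipeline_name, poll_seconds=30, timeout_seconds=3600,
                 sleep=time.sleep):
    """Start a pipeline execution and poll until it reaches a terminal status."""
    arn = client.start_pipeline_execution(PipelineName=pipeline_name)[
        "PipelineExecutionArn"
    ]
    waited = 0
    while True:
        status = client.describe_pipeline_execution(PipelineExecutionArn=arn)[
            "PipelineExecutionStatus"
        ]
        if status in TERMINAL_STATUSES:
            return arn, status
        if waited >= timeout_seconds:
            raise TimeoutError(f"{arn} still {status} after {waited}s")
        sleep(poll_seconds)
        waited += poll_seconds


# In the GitHub Actions job this might be called as (pipeline name is hypothetical):
#   import boto3, sys
#   arn, status = run_pipeline(boto3.client("sagemaker"), "my-training-pipeline")
#   sys.exit(0 if status == "Succeeded" else 1)
```

Exiting non-zero on any status other than `Succeeded` lets the workflow run itself report the pipeline outcome.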

Assets & references

Code, diagrams, study material

Repository

MLOps CDK repo: reference implementation for GitHub Actions and SageMaker pipeline orchestration.

github.com/03sarah/...