Vertex AI Training & Evaluation Pipeline

Kubeflow Pipelines v2 · Production‑grade MLOps on GCP

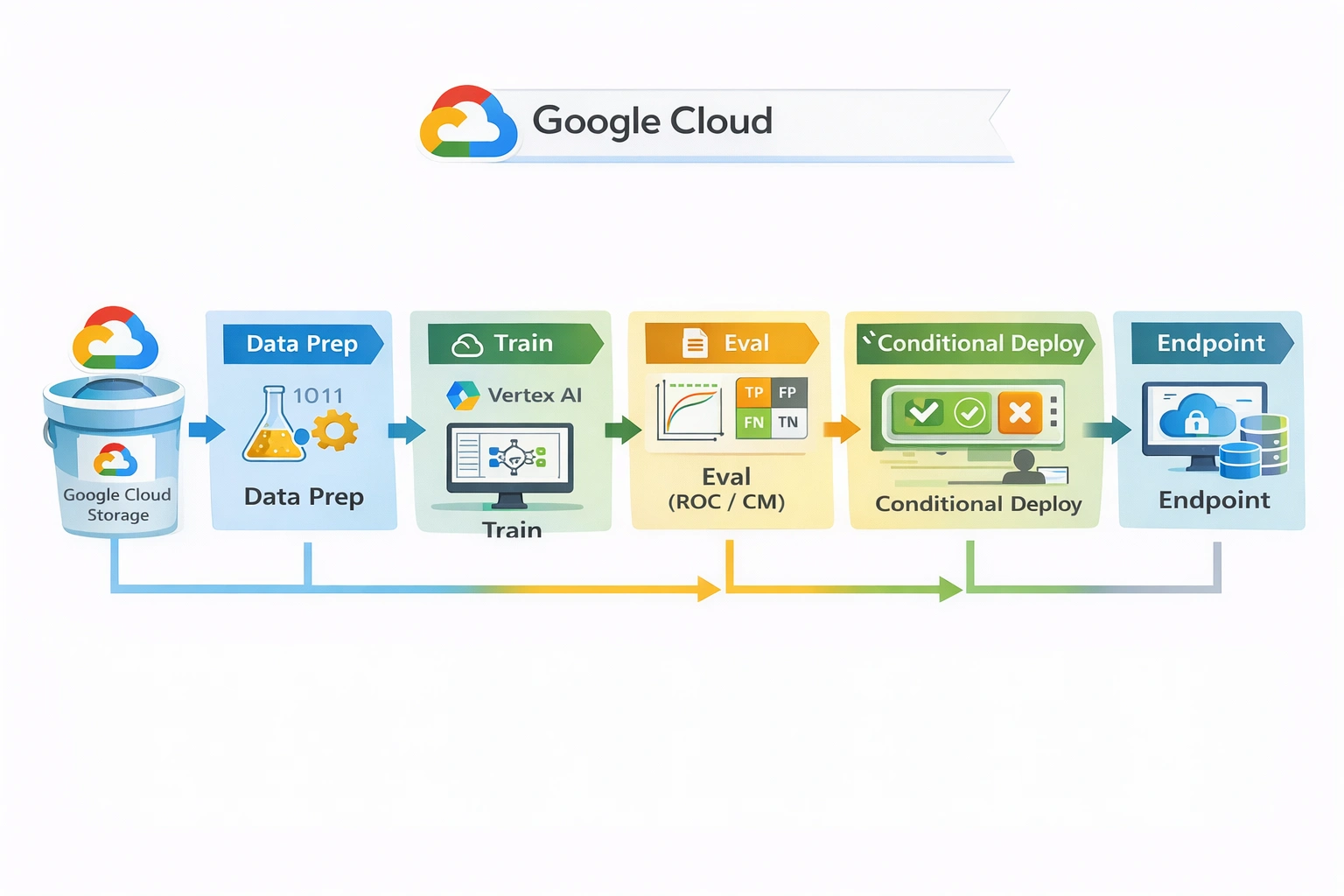

Production‑grade ML training, evaluation, gating, and conditional deployment pipeline on Google Vertex AI using Kubeflow Pipelines (KFP v2). Enforces model quality, tracks lineage, and deploys only validated models.

Project Summary

AI + MLOps + Cloud Platform Engineering

Category

AI/ML · MLOps · Platform Engineering

Industry

Cross‑industry Enterprise AI Platform

MLOps Focus

Training · Evaluation · Gating · Conditional Deploy

Key Technologies & Concepts

ML/AI platform primitives

Problem & Objective

Why this pipeline exists

Problem

Manual, notebook‑driven ML workflows lack reproducibility, governance, automated evaluation gates, and production discipline. There is no structured way to enforce model quality before deployment on GCP.

Objective

Build a production‑grade, automated ML training/evaluation pipeline on GCP that enforces quality gates, tracks lineage, and conditionally deploys models to Vertex AI endpoints using native MLOps primitives.

Solution & Architecture

Vertex AI native orchestration

Overview

Vertex AI Pipelines (KFP v2) orchestrates data preparation, model training (RandomForest), evaluation (ROC, confusion matrix, accuracy), quality gating, conditional deployment to Vertex AI Endpoints, and scheduled retraining.
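As a rough illustration of what the evaluation step computes, the sketch below derives a confusion matrix and accuracy from label lists in plain Python. The function names and sample labels are hypothetical. The actual component presumably relies on scikit‑learn, so treat this as a sketch of the arithmetic rather than the pipeline's code.

```python
from collections import Counter


def confusion_matrix(y_true, y_pred, labels):
    """Return a matrix with rows = true label, columns = predicted label."""
    counts = Counter(zip(y_true, y_pred))
    return [[counts[(t, p)] for p in labels] for t in labels]


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equal length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


# Hypothetical sample data, for illustration only.
y_true = [0, 0, 1, 1, 1, 0]
y_pred = [0, 1, 1, 1, 0, 0]

print(confusion_matrix(y_true, y_pred, labels=[0, 1]))  # [[2, 1], [1, 2]]
print(accuracy(y_true, y_pred))  # 4 of 6 predictions are correct
```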

Skills & Technologies

ML/platform engineering stack

Primary (Advanced)

- MLOps Architecture

- Vertex AI Pipelines / KFP v2

- Cloud AI Platform Engineering

- Production ML Workflow Design

Secondary

- Kubeflow Pipelines SDK

- scikit‑learn · Vertex AI SDK

- GCS · IAM · Workload Identity

- GitHub Actions (CI trigger)

Languages & DevOps

Pipeline Execution & Governance

Conditional gates, lineage, scheduling

Execution

- Manual / CI trigger → Vertex AI Pipeline run

- KFP v2 components: data prep, training, evaluation, deploy

- Artifacts stored in GCS, metrics in Vertex AI Metadata

Governance

- Explicit evaluation gate (accuracy/ROC threshold)

- Conditional pipeline branch: deploy only if gate passes

- Model versioning in Vertex AI Model Registry

- IAM least‑privilege + Workload Identity Federation
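The evaluation gate listed above reduces to a small decision function. The sketch below is one way it could look, under stated assumptions: the threshold values, the metric keys (`accuracy`, `roc_auc`), and the rule that both metrics must pass are all hypothetical, since the source does not specify them. In the pipeline, the boolean result would drive the conditional deploy branch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateThresholds:
    # Hypothetical defaults; the source does not state the actual values.
    min_accuracy: float = 0.85
    min_roc_auc: float = 0.90


def passes_gate(metrics: dict, thresholds: GateThresholds = GateThresholds()) -> bool:
    """Return True only if every gated metric meets its threshold.

    A missing metric fails the gate, so an incomplete evaluation never deploys.
    """
    accuracy = metrics.get("accuracy")
    roc_auc = metrics.get("roc_auc")
    if accuracy is None or roc_auc is None:
        return False
    return accuracy >= thresholds.min_accuracy and roc_auc >= thresholds.min_roc_auc


print(passes_gate({"accuracy": 0.91, "roc_auc": 0.95}))  # True
print(passes_gate({"accuracy": 0.91, "roc_auc": 0.80}))  # False
print(passes_gate({"accuracy": 0.91}))                   # False
```

Failing closed on a missing metric is a deliberate choice here: a broken evaluation step should block deployment, not bypass the gate.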

Challenges & Resolutions

Wiring KFP v2 components → Vertex Pipelines: used native KFP interfaces.

ROC/metrics logging: sanitized inputs for Vertex metrics APIs.

Conditional gates: pipeline condition with threshold check.

Model format for serving: packaged as Vertex‑compatible artifact.

Notebook to production: refactored into pipeline components.
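The ROC/metrics logging item does not say what had to be sanitized. One plausible cause, assumed here, is non‑finite values: recent scikit‑learn versions of `roc_curve` return `inf` as the first threshold, which does not serialize cleanly to JSON. The hypothetical helper below converts ROC arrays to plain finite floats before they are passed to a metrics logger.

```python
import math


def sanitize_roc(fpr, tpr, thresholds):
    """Return (fpr, tpr, thresholds) as lists of finite Python floats.

    Assumption: infinite thresholds are clamped to the largest or smallest
    finite threshold, and NaN values are replaced with the lower bound.
    """
    finite = [float(t) for t in thresholds if math.isfinite(t)]
    ceiling = max(finite) if finite else 1.0
    floor = min(finite) if finite else 0.0

    def clean(value, low, high):
        value = float(value)
        if math.isnan(value):
            return low
        # Clamping also maps +inf to high and -inf to low.
        return min(max(value, low), high)

    return (
        [clean(v, 0.0, 1.0) for v in fpr],
        [clean(v, 0.0, 1.0) for v in tpr],
        [clean(t, floor, ceiling) for t in thresholds],
    )


# Hypothetical ROC points with an infinite first threshold.
fpr, tpr, thr = sanitize_roc(
    [0.0, 0.0, 0.5, 1.0],
    [0.0, 0.5, 1.0, 1.0],
    [float("inf"), 0.9, 0.6, 0.2],
)
print(thr)  # [0.9, 0.9, 0.6, 0.2]
```

The cleaned lists could then be handed to a logger such as the KFP `ClassificationMetrics` artifact's `log_roc_curve` method. Whether the original pipeline does exactly this is not confirmed by the source.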

GCP CI/CD · Architecture & Mapping

MLOps constructs to KFP/Vertex

| Architecture Block | GCP / KFP v2 Construct |
|---|---|
| Source Repository | GitHub (vertex-ai-mlops-kfp2) |
| Source Trigger | Manual / GitHub Actions (CI) |
| CI Runner | ubuntu‑latest (optional) |
| Pipeline Orchestration | Vertex AI Pipelines (KFP v2) |
| Data Processing | Python component (Pandas + sklearn) |
| Training | Vertex AI Training (custom job) |
| Evaluation | Python component (ROC, CM, accuracy) |
| Quality Gate | Conditional + Vertex AI Metadata check |
| Model Upload | Vertex AI Model Registry |
| Deployment | Vertex AI Endpoint (conditional) |
| Artifact Store | GCS · Metadata Store |

Assets & References

Code, diagrams, study material

Repository

vertex-ai-mlops-kfp2: full pipeline code, components, deployment specs.

github.com/Rajesh-Arigala/…

Study Material Resources

Official docs, restricted KFP guides, Colab notebooks
