MLflow

ML Lifecycle Management & MLOps

MLflow is an open-source platform for managing the complete machine learning lifecycle, from experimentation to deployment. The work shown here covers configuring MLflow tracking servers on AWS ECS Fargate, tracking experiments across cloud platforms, managing model versions, and building reproducible machine learning workflows aligned with MLOps best practices.

Projects Showcase

Production-grade MLflow implementations for enterprise machine learning lifecycle management

AWS

MLflow on AWS ECS Fargate

MLflow tracking server deployment on AWS ECS Fargate with complete infrastructure automation and scalable backend configuration for enterprise MLOps.

- MLflow tracking server deployed on AWS ECS Fargate as a scalable service

- Track experiments with multiple runs, storing params, metrics, and artifacts

- Model registry for version control and management

- Model-URI based predictions and model version updates

- Integration with SageMaker AI for end-to-end ML workflows

- Comprehensive logging and monitoring with latency and error rate tracking
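As a rough illustration of the deployment described above, the sketch below builds a Fargate task definition for an MLflow tracking server as a plain Python dictionary, in the shape that the ECS `RegisterTaskDefinition` API accepts. The image URI, database connection string, bucket name, task family, and CPU/memory sizes are all placeholder assumptions and are not taken from the project. A real deployment would also need an execution role to pull the image from ECR, and would normally inject database credentials as secrets rather than on the command line.

```python
import json


def mlflow_task_definition(image, backend_store_uri, artifact_root, port=5000):
    """Build a Fargate task definition dict for an MLflow tracking server.

    All argument values are supplied by the caller; nothing here is
    specific to the project described in this document.
    """
    return {
        "family": "mlflow-tracking-server",  # hypothetical family name
        "networkMode": "awsvpc",             # required for Fargate tasks
        "requiresCompatibilities": ["FARGATE"],
        "cpu": "512",                        # assumed size, adjust as needed
        "memory": "1024",
        "containerDefinitions": [
            {
                "name": "mlflow",
                "image": image,
                "essential": True,
                "portMappings": [{"containerPort": port, "protocol": "tcp"}],
                # Standard `mlflow server` options: metadata goes to the
                # backend store, artifacts go to the artifact root.
                "command": [
                    "mlflow", "server",
                    "--host", "0.0.0.0",
                    "--port", str(port),
                    "--backend-store-uri", backend_store_uri,
                    "--default-artifact-root", artifact_root,
                ],
            }
        ],
    }


# Placeholder values for illustration only.
task_def = mlflow_task_definition(
    image="<account-id>.dkr.ecr.<region>.amazonaws.com/mlflow:latest",
    backend_store_uri="postgresql://user:password@db-host:5432/mlflow",
    artifact_root="s3://example-mlflow-artifacts/",
)
print(json.dumps(task_def, indent=2))
```

The resulting JSON could be registered with the AWS CLI or an SDK, or used as a reference when writing the equivalent infrastructure-as-code definition.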

SageMaker

MLflow Labs with AWS SageMaker

Complete MLOps workflow using AWS SageMaker AI with MLflow for experiment tracking, model training, and deployment to real-time endpoints.

- Data Preparation (DP), Model Training (MT), Model Evaluation (ME), Model Deployment (MD)

- Build, Train, Deploy machine learning models on AWS SageMaker AI

- Deploy models to real-time endpoints with auto-scaling and low latency

- Training and hyperparameter tuning, including management of .tar model artifacts

- ML hosting services with auto-scaling capabilities

- IAM role configuration for Notebook instances with S3 bucket access

- Custom permissions for ECR and S3 full access

- JupyterLab environment setup with Git integration

- Experiment execution and registration on the MLflow server (running on ECS Fargate)

- Training code image pulled from Amazon Elastic Container Registry (ECR)

- Prediction code with model_uri integration for endpoint deployment

- Model registry updates based on business requirements

- Comprehensive logging and monitoring: latency (ms), error rates, etc.
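The `model_uri` step in the list above might look roughly like the following sketch. MLflow addresses registered models with URIs of the form `models:/<name>/<version>`, and `mlflow.pyfunc.load_model` accepts such a URI. The function names, the model name `churn-classifier`, and the tracking URI argument are hypothetical and chosen only for illustration; the project's actual prediction code is not shown in the source.

```python
def registered_model_uri(name, version):
    """Return an MLflow Model Registry URI such as 'models:/churn-classifier/3'."""
    if not name or "/" in name:
        raise ValueError("model name must be non-empty and contain no '/'")
    return f"models:/{name}/{version}"


def predict_with_registered_model(tracking_uri, name, version, data):
    """Load a registered model version from the tracking server and predict.

    MLflow is imported lazily so the URI helper above can be used
    (and tested) without MLflow installed.
    """
    import mlflow
    import mlflow.pyfunc

    # Point the client at the tracking server (e.g. the one on ECS Fargate).
    mlflow.set_tracking_uri(tracking_uri)
    model = mlflow.pyfunc.load_model(registered_model_uri(name, version))
    return model.predict(data)


# Hypothetical model name and version.
uri = registered_model_uri("churn-classifier", 3)
print(uri)  # models:/churn-classifier/3
```

Updating the served model to a new registry version then amounts to changing the version passed to the helper, which keeps the prediction code itself unchanged.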

MLOps

MLOps Ecosystem Projects

Complementary projects demonstrating the complete MLOps ecosystem with containerization, orchestration, and CI/CD pipelines.

- AWS CDK for MLflow Infrastructure: Programmatic infrastructure deployment using CDK Toolkit

- Docker for ML Models: Containerization of ML applications with Docker and Docker Compose

- Kubernetes for ML Deployment: Orchestration of ML models on Kubernetes clusters

- CI/CD Pipelines: Automated ML pipeline deployment with GitHub Actions and AWS CodePipeline

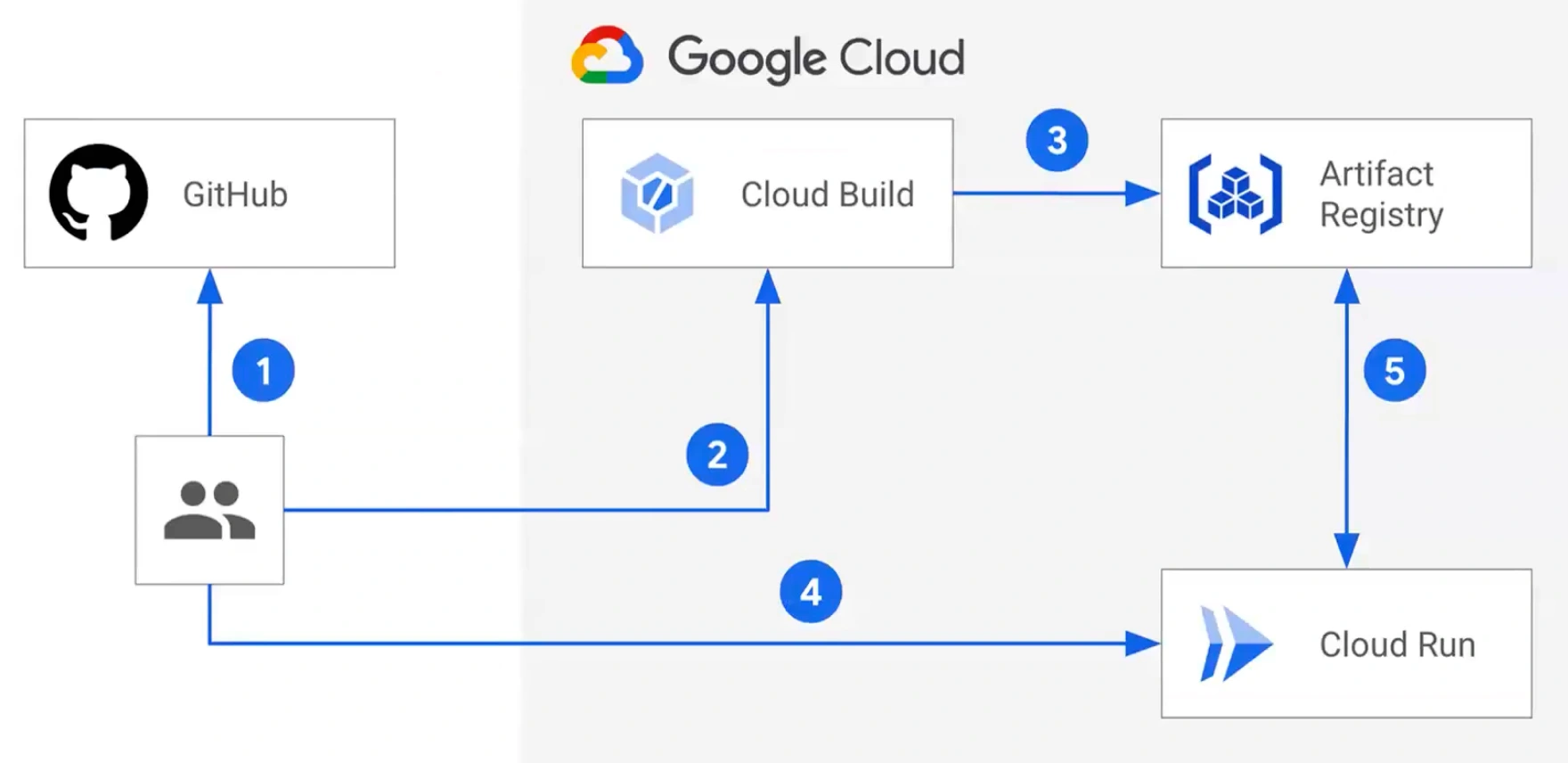

- Multi-Cloud MLOps: MLflow deployments across AWS, Azure, and GCP platforms

- Experiment tracking across multiple runs and parameters

- Model versioning and lifecycle management

- Artifact storage and management (S3 integration)

- Model registry with staging/production workflows

- Integration with major ML frameworks (TensorFlow, PyTorch, Scikit-learn)

- Multi-user collaboration and access control

- Automated model deployment and monitoring
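The latency and error-rate monitoring mentioned in the projects above can be reduced to a small summary computation. The sketch below is one possible way to do it, assuming each request is recorded with its latency in milliseconds and its HTTP status code; the record type, the choice of counting 5xx responses as errors, and the nearest-rank p95 are assumptions for illustration, not the project's actual monitoring setup.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestRecord:
    """One endpoint invocation (hypothetical record shape)."""
    latency_ms: float
    status_code: int


def summarize(records):
    """Return request count, mean and p95 latency (ms), and error rate."""
    if not records:
        raise ValueError("no records to summarize")
    latencies = sorted(r.latency_ms for r in records)
    # Treat server-side failures (HTTP 5xx) as errors.
    errors = sum(1 for r in records if r.status_code >= 500)
    # Nearest-rank percentile: smallest value with at least 95% at or below it.
    rank = math.ceil(0.95 * len(latencies))
    return {
        "count": len(records),
        "mean_latency_ms": sum(latencies) / len(latencies),
        "p95_latency_ms": latencies[rank - 1],
        "error_rate": errors / len(records),
    }


# Made-up sample data for illustration.
records = [
    RequestRecord(12.0, 200),
    RequestRecord(15.0, 200),
    RequestRecord(20.0, 200),
    RequestRecord(250.0, 500),
]
summary = summarize(records)
print(summary)
```

Values like these could be logged to the tracking server as metrics or pushed to a monitoring service, depending on how the deployment is instrumented.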