Azure ML Platform Infrastructure as Code

Production-grade, end-to-end Azure ML platform automation with IaC

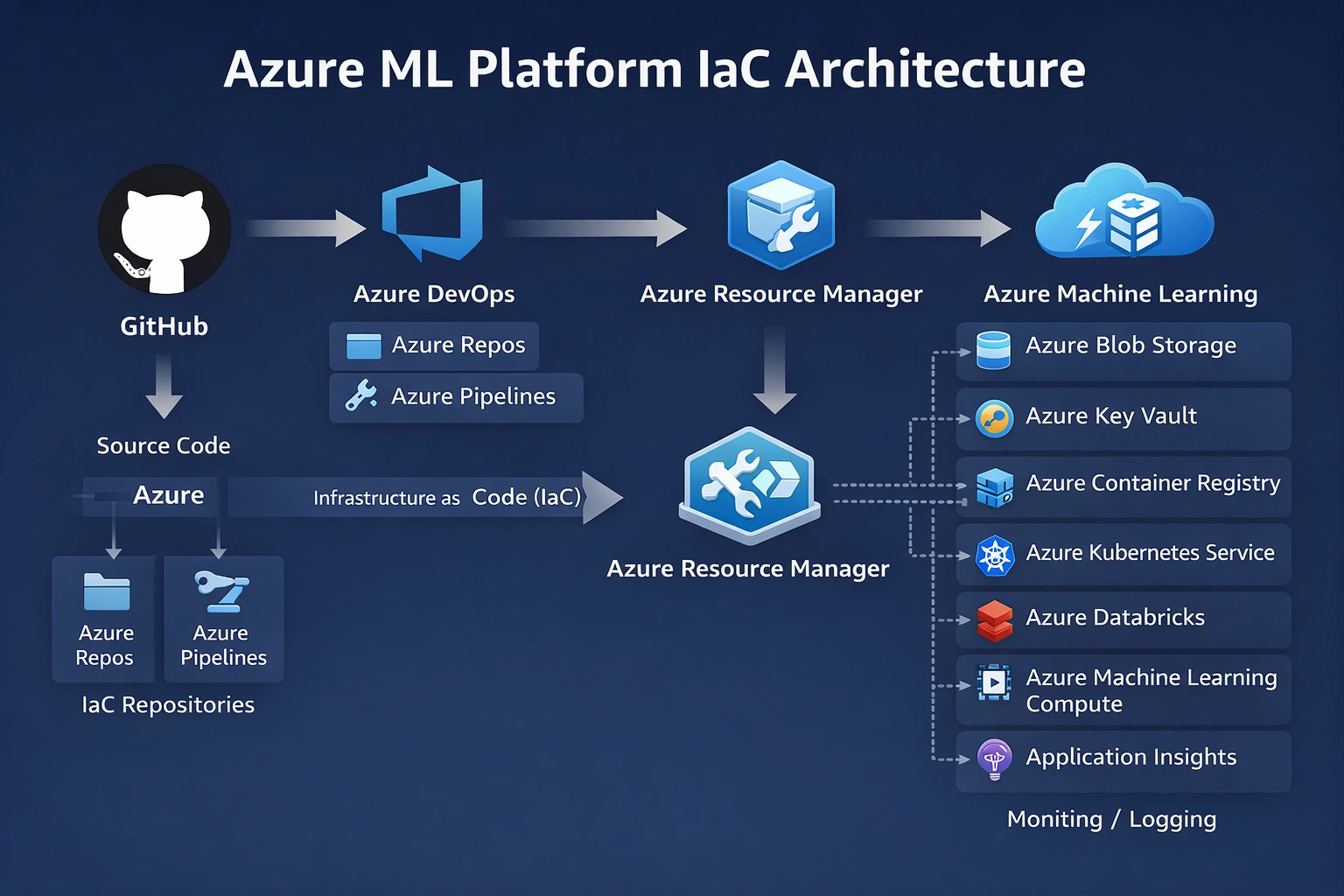

Production-grade, end-to-end automation of Azure ML platform infrastructure, provisioned as Infrastructure as Code through Azure DevOps CI/CD and Azure CLI v2. The result is a fully automated, reproducible Azure ML platform with standardized workspace setup, secure RBAC, and cost-aware autoscaling compute.

Project Summary

Comprehensive Project Overview

Project Category

Cloud + MLOps (Infrastructure as Code for AI Platforms)

Industry/Domain

Cross-Industry (Enterprise AI Platforms / MLOps Infrastructure)

AI/ML/DevOps Focus

AI Platform Engineering / MLOps Infrastructure

Key Technologies & Concepts

Core Technologies Used

Azure ML Platform IaC Keywords

Problem & Objective

What problem did this project solve?

Problems Solved

- Teams struggle to set up Azure ML environments reliably and repeatedly

- Infrastructure created manually leads to inconsistency across environments

- Manual setup leaves governance poor, access control weak, and costs unchecked

- The result is broken ML pipelines, environment drift, and security risks

- Slow onboarding of new AI projects due to manual cloud setup

Primary Objectives

- Create a reproducible, secure, and cost-governed Azure ML platform foundation

- Provision the platform on demand via CI/CD pipelines

- Enable consistent environments for ML training and deployment

- Support multiple environments (Dev/Prod) without manual cloud setup

- Allow ML teams to focus on models instead of cloud plumbing

Solution & Architecture

Architectural Overview

Solution Overview

Designed and implemented a CI/CD-driven Infrastructure-as-Code pipeline that provisions and configures the complete Azure Machine Learning platform (resource groups, AML workspace, CLI context, RBAC, and autoscaling compute) using Azure DevOps YAML and Azure CLI v2.

The solution creates a standardized, re-runnable cloud ML foundation that downstream training and deployment pipelines can reliably build upon.

The platform is designed to scale compute elastically using Azure ML autoscaling clusters (min=0, max=N) to handle variable training loads while controlling cost. Infrastructure pipelines are idempotent and re-runnable, ensuring reliable provisioning across environments without drift.
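As a rough illustration of the scale-to-zero setup described above, the following Bash sketch wraps `az ml compute create` with `min=0` and a caller-supplied maximum. The function name, the default VM size, and the 120-second idle timeout are assumptions for illustration and do not come from the project; the sketch also assumes the resource group and workspace have already been set as CLI defaults.

```bash
# Sketch only: create an Azure ML compute cluster that can scale down to zero nodes.
# Assumptions (not from the project): function name, default VM size, idle timeout.
# Relies on CLI defaults (group/workspace) having been configured beforehand.
create_autoscale_cluster() {
  local cluster_name="$1"                 # hypothetical name, e.g. cpu-cluster
  local max_nodes="$2"                    # the "N" in max=N
  local vm_size="${3:-Standard_DS3_v2}"   # assumed default size
  local idle_seconds="${4:-120}"          # assumed idle time before scale-down

  # Reject empty or non-numeric values, then reject zero.
  case "$max_nodes" in
    ''|*[!0-9]*)
      echo "max_nodes must be a positive integer" >&2
      return 1
      ;;
  esac
  if [ "$max_nodes" -lt 1 ]; then
    echo "max_nodes must be at least 1" >&2
    return 1
  fi

  # min-instances 0 lets the cluster release all nodes when no jobs are queued.
  az ml compute create \
    --name "$cluster_name" \
    --type amlcompute \
    --size "$vm_size" \
    --min-instances 0 \
    --max-instances "$max_nodes" \
    --idle-time-before-scale-down "$idle_seconds"
}
```

A pipeline step could then call, for example, `create_autoscale_cluster cpu-cluster 4`. A longer idle timeout trades some cost for fewer cold starts between closely spaced jobs.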

Key Components

- Cloud Platform: Microsoft Azure (Azure Machine Learning, Azure DevOps, Azure Resource Manager)

- Services: Azure DevOps (Repos, YAML Pipelines), Azure Machine Learning (Workspace, Compute Clusters, Model Registry)

- Tools: Azure CLI v2, Azure ML CLI v2, Service Principals & RBAC (Contributor role)

- Storage & Monitoring: Azure Storage (Workspace-backed), Azure Monitor / Application Insights
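One way to keep the Contributor role from being broader than necessary is to assign it at resource-group scope rather than subscription scope. The Bash sketch below shows that idea with `az role assignment create`. The function name and the choice of resource-group scope are assumptions, since the project only states that Service Principals, the Contributor role, and scoped service connections were used.

```bash
# Sketch only: grant a service principal Contributor on a single resource group.
# Assumptions (not from the project): function name and resource-group scoping.
grant_rg_contributor() {
  local sp_app_id="$1"        # application (client) ID of the service principal
  local subscription_id="$2"
  local resource_group="$3"

  # Scope the assignment to one resource group instead of the whole subscription.
  local scope="/subscriptions/${subscription_id}/resourceGroups/${resource_group}"

  az role assignment create \
    --assignee "$sp_app_id" \
    --role "Contributor" \
    --scope "$scope"
}
```

The identity that runs this command needs permission to create role assignments (for example Owner or User Access Administrator on that scope), so it would typically be run once by an administrator rather than by the pipeline's own service principal.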

AI/ML & DevOps Details

Technical Implementation Details

AI/ML Type or DevOps Focus

DevOps / MLOps Platform Engineering (Infrastructure as Code for AI Platforms)

Models, Pipeline, or Automation

CI/CD Infrastructure pipelines (Pipeline-as-Code) for automated Azure ML platform provisioning and configuration

CI/CD & Containerization Tools

Azure DevOps (YAML Pipelines), Azure CLI v2, Azure ML CLI v2

Monitoring & Optimization

Pipeline execution logging (Azure DevOps), Azure ML workspace telemetry, idempotent infra checks, and cost optimization via autoscaling compute (scale-to-zero)

Skills & Technologies Used

Technical Proficiency Demonstrated

Primary Skills

- Cloud Platform Engineering (Azure) - Advanced

- Infrastructure as Code (IaC) - Advanced

- CI/CD Pipeline Engineering (Azure DevOps, YAML) - Advanced

- MLOps Platform Setup (Azure ML) - Advanced

- Cloud Security & RBAC - Intermediate-Advanced

- Cloud Cost Optimization (Autoscaling Compute) - Intermediate

Secondary Tools / Frameworks

- Azure CLI v2

- Azure ML CLI v2

- Git (version control)

- Linux (Ubuntu runners)

- YAML (Pipeline-as-Code)

Programming Languages

- Python

- YAML (CI/CD pipeline configuration)

- Bash (CLI automation)

- GitHub CLI Commands

Cloud & DevOps Tools

Challenges & Outcomes

Technical challenges and resolutions

Key Technical Challenges

- Wiring Azure DevOps pipelines securely to Azure ML using Service Principals and RBAC without over-privileging

- Making infrastructure pipelines idempotent and re-runnable

- Managing Azure ML CLI v2 installation and versioning reliably inside ephemeral CI runners

- Designing autoscaling compute that balances performance with cost (scale-to-zero without breaking jobs)

- Ensuring consistent workspace context across CLI steps

How They Were Resolved

- Implemented RBAC with Service Principals (Contributor role) and scoped service connections

- Built existence checks and conditional creation into IaC pipelines (idempotent design)

- Pinned and installed Azure CLI + AML CLI v2 explicitly within the pipeline

- Configured Azure ML compute autoscaling (min=0, max=N)

- Centralized workspace and RG context configuration in the pipeline (CLI defaults)
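The resolutions above (pinned CLI extension, existence checks, and centralized CLI defaults) could be sketched in Bash roughly as follows. All function names are hypothetical, the extension version is supplied by the caller rather than taken from the project, and the project's actual checks may differ.

```bash
# Sketch only: helper functions a provisioning step might source.
# Function names are hypothetical; the version to pin is chosen by the caller.

# Install a pinned Azure ML CLI v2 extension on a fresh (ephemeral) runner.
ensure_ml_extension() {
  local version="$1"
  # Remove the legacy v1 extension and any existing ml extension first,
  # so the pinned version is the only one installed.
  az extension remove --name azure-cli-ml >/dev/null 2>&1 || true
  az extension remove --name ml >/dev/null 2>&1 || true
  az extension add --name ml --version "$version" --yes
}

# Create the resource group only if it does not exist yet.
ensure_resource_group() {
  local name="$1" location="$2"
  if [ "$(az group exists --name "$name")" = "true" ]; then
    echo "Resource group $name already exists; skipping."
  else
    az group create --name "$name" --location "$location"
  fi
}

# Create the workspace only if `show` cannot find it.
ensure_workspace() {
  local name="$1" resource_group="$2" location="$3"
  if az ml workspace show --name "$name" \
       --resource-group "$resource_group" >/dev/null 2>&1; then
    echo "Workspace $name already exists; skipping."
  else
    az ml workspace create --name "$name" \
      --resource-group "$resource_group" --location "$location"
  fi
}

# Set the resource group and workspace once so later steps can omit them.
set_cli_defaults() {
  local resource_group="$1" workspace="$2"
  az configure --defaults group="$resource_group" workspace="$workspace"
}
```

Called in order (`ensure_ml_extension`, `ensure_resource_group`, `ensure_workspace`, `set_cli_defaults`), a second pipeline run would skip the resources that already exist. Note that the `show`-based check treats any failure, including a permissions error, as "not found", so a stricter version might inspect the error before creating.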

Azure DevOps CI/CD - Architecture & YAML Mapping

Architecture to YAML construct mapping

| Architecture Block | YAML Construct |
|---|---|
| Azure Repos / GitHub | Trigger / pr |
| Azure Pipelines | Pipeline root, Stages |
| Linux Runner | pool: vmImage |
| Build/Provision Stage | stage → jobs → steps |
| Azure CLI Setup | AzureCLI@2 task |
| Azure ML CLI Setup | az extension add -n ml (AML CLI v2) |
| Azure Resource Group | az group create (IaC step) |
| Azure ML Workspace | az ml workspace create |
| Compute Cluster | az ml compute create |
| RBAC / Service Principal | azureSubscription: (Service Connection) |
| Environment Context (Dev/Prod) | variables: (env-specific YAML) |
| Manual Approval (Prod) | Environment Approvals (Azure DevOps Environments) |
| Idempotent Provisioning | Conditional checks in CLI steps |
| Observability / Logs | Azure DevOps Pipeline Logs |
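The Environment Context row maps Dev/Prod settings to pipeline variables. Inside a CLI step, the same idea could be mirrored in Bash as a small lookup, sketched below. Every resource name and node count here is a hypothetical placeholder, not a value from the project.

```bash
# Sketch only: resolve per-environment settings inside a CLI step.
# All names and sizes below are hypothetical placeholders.
load_env_settings() {
  case "$1" in
    dev)
      RESOURCE_GROUP="rg-aml-dev"
      WORKSPACE="aml-ws-dev"
      MAX_NODES=2
      ;;
    prod)
      RESOURCE_GROUP="rg-aml-prod"
      WORKSPACE="aml-ws-prod"
      MAX_NODES=8
      ;;
    *)
      echo "Unknown environment: $1 (expected dev or prod)" >&2
      return 1
      ;;
  esac
  # Export so that later commands in the same step can read the values.
  export RESOURCE_GROUP WORKSPACE MAX_NODES
}
```

A step would call `load_env_settings dev` (or `prod`) before running the provisioning commands. Failing fast on an unknown environment name avoids provisioning into an unintended resource group.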

Assets & References

Code, diagrams, study material

GitHub Repository

Source code repository containing the Azure ML IaC configuration and Azure DevOps pipelines.
